· AI Infrastructure · 12 min read

Scaling Recommendations with TPU SparseCore

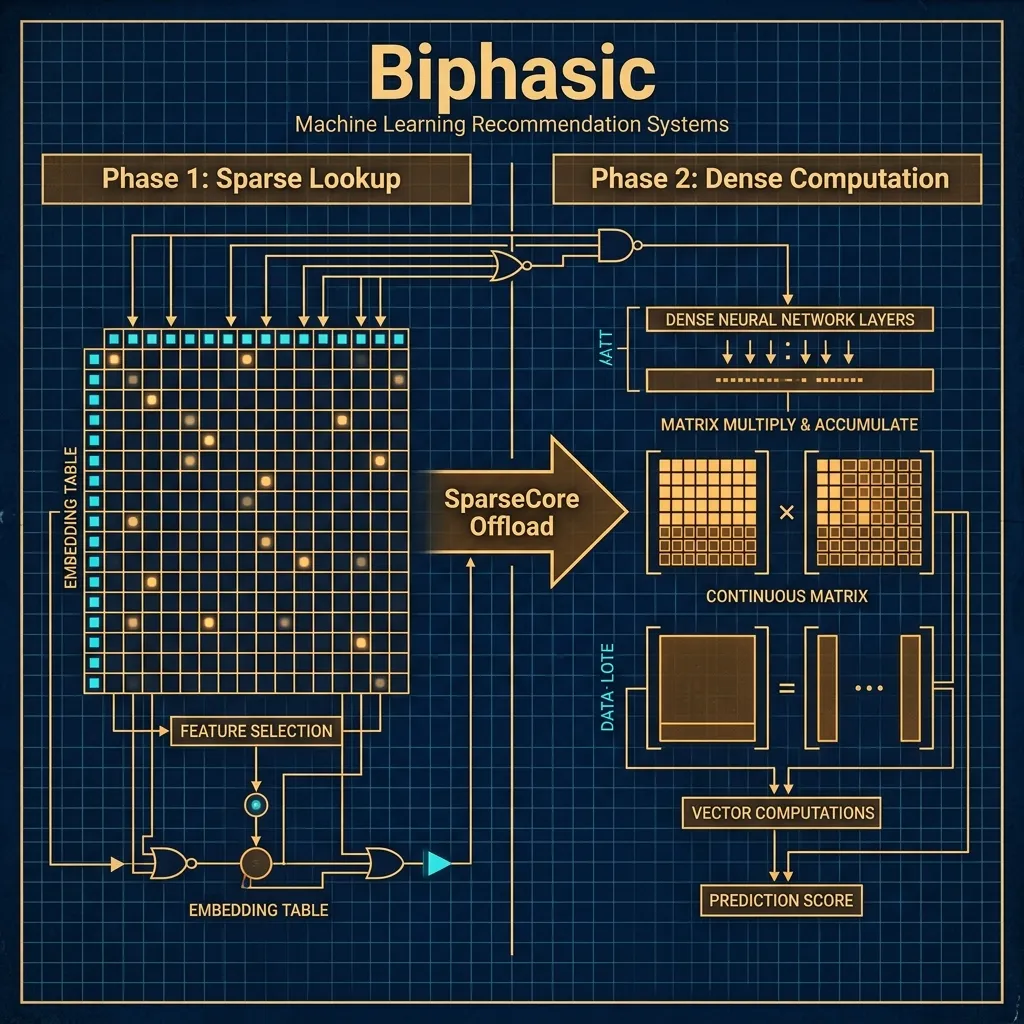

TPU SparseCore: How specialized silicon solves the massive memory bottleneck of embedding lookups in large-scale recommendation models.

- Memory Bandwidth is the Real Bottleneck: Recommendation systems are bound by memory access patterns (sparse lookups), not raw compute power.

- SparseCore Solves Discontinuous Lookups: Google's custom SparseCore silicon offloads embedding lookups from main TensorCores, preventing pipeline stalls.

- Architectural Co-Design Wins: Decoupling sparse memory fetching from dense arithmetic logic provides a multi-fold acceleration for serving large-scale recommendation models.

Let us set the scene. You are staring at a Grafana dashboard at two in the morning. Your retail application is under heavy holiday load. The primary product recommendation API is spiking past five hundred milliseconds of latency. You check the standard metrics. CPU utilization is nominal. Network I/O is normal. You examine the expensive GPU fleet serving the deep learning recommendation model. The compute units are nearly idle, resting comfortably at an agonizingly low fifteen percent utilization. Yet, the latency is astronomical.

You are experiencing the silent bottleneck of modern machine learning infrastructure. You are not starved for compute. You are entirely bottlenecked by memory bandwidth and random access patterns.

In my experience architecting these systems, we spend a tremendous amount of time in this industry chasing floating point operations per second. We celebrate massive clusters training dense transformer networks. But when you move out of the realm of language modeling and step into the reality of personalized recommendations, the entire game shifts. Recommender systems, click-through rate prediction models, and ad-ranking algorithms are fundamentally different workloads. They are sparse, not dense.

The Anatomy of the Memory Standoff

To architect a solution, we must first deeply inspect why the hardware fails us here. When a user logs into an e-commerce platform, they bring a highly discontinuous history. They clicked on three items out of a catalog of fifty million. They belong to a specific demographic out of hundreds of overlapping categories. In machine learning, we handle this sparsity by representing categorical features using embeddings.

An embedding is simply a dense vector representation of a sparse concept. However, before you can perform the dense matrix multiplications that neural networks excel at, you must execute a lookup. You have an enormous matrix. Sometimes these tables exceed several terabytes in size. For every single batch of users, you need to pull the specific vector for item ID 4599201 and the vector for user ID 99281.

On traditional GPU architectures, this lookup procedure is disastrous. GPUs are designed to ingest large continuous blocks of data and multiply them together rapidly. They demand predictable data access patterns. Embedding lookups are the exact opposite. They are entirely random memory accesses.

When you ask a standard hardware accelerator to fetch random rows from its High Bandwidth Memory, you break all the rules of cache locality. The hardware is forced to read tiny chunks of data from disparate physical memory modules. The processing cache misses repeatedly. The incredibly powerful arithmetic logic units sit idle, waiting for the memory controllers to fetch the next set of vectors.

The Parameter Server Fallacy

Before hardware manufacturers recognized the sparse memory lookup problem, the standard industry pattern was to deploy asynchronous parameter servers. If you operated recommendation engines in the early twenty-teens, you likely built these architectures. You would run giant CPU clusters whose entire job was simply maintaining the massive embedding matrices in system RAM. Your dedicated GPU workers would asynchronously send Remote Procedure Calls over a standard Ethernet network whenever they needed an embedding.

This architecture ultimately failed as models scaled up to the billion-parameter scale. The network packet overhead became the limiting factor. When you request millions of random floats over standard TCP protocols, you incur massive serialization penalties. The GPUs ended up spending ninety percent of their time waiting for the network switch to route parameter requests. You were essentially using multi-thousand dollar compute accelerators as very expensive network polling devices.

Attempts were made to implement software caching layers. Engineers built complex frequency-based caching logic directly onto the GPU to store the most commonly accessed groupings locally while asking the parameter server for rare embeddings. It worked poorly. The system complexity skyrocketed. Operational overhead was brutal. The fundamental reality remained unbroken. Standard networks and standard CPUs were too slow to feed the beast. Moving the storage locally directly onto the accelerator fabric, completely bypassing standard networking entirely, became the only viable path forward for the industry.

Enter Ironwood and Dedicated Silicon

This is the exact hardware reality that Google engineers faced when trying to serve billion-user applications. If you want to scale recommendations, you cannot simply throw more dense compute at the problem. You need hardware explicitly co-designed for sparsity.

The TPU v7 architecture, codenamed Ironwood, introduces a significant paradigm shift with its defining feature. That feature is the SparseCore.

Google recognized that treating embedding lookups as an afterthought was fundamentally limiting the entire machine learning community. Instead of just adding more memory to the existing TPU architecture natively, they designed an entirely separate, asynchronous processor that sits physically alongside the primary dense matrix multiplication units. The SparseCore is customized silicon engineered exclusively to execute embedding lookups, memory gathers, and complex reduction operations.

Think of the SparseCore as an incredibly aggressive, highly specialized memory manager. While the main TPU cores are busy crunching the previous batch’s dense neural network layers, the SparseCore is asynchronously fetching the embeddings for the next batch immediately. It operates on its own dedicated memory channels. It executes optimized instructions for scatter-gather operations natively. Most importantly, it routes these lookups across the internal TPU interconnect without interrupting the primary compute units.

By decoupling the sparse memory fetching from the dense arithmetic logic entirely, the Ironwood architecture eliminates the stalling that plagues traditional accelerators. The primary cores never wait for memory. They simply receive a neatly packaged tensor of resolved embeddings just in time for the dense layers to process them.

Bridging Hardware and Software with JAX

Hardware innovation is completely useless if the practitioner cannot access it practically. We do not want to write custom low-level integration kernels on weekend deployments just to look up user profiles. This is where the beauty of the JAX ecosystem comes into play. The compiler stack, Accelerated Linear Algebra, has been heavily updated to understand the SparseCore hardware topology out of the box.

Let us walk through the narrative implementation locally. We are not just going to run a script blindly. We are going to build a functional system that maps our logical problem to the physical hardware intelligently.

In a basic JAX implementation layout, an embedding lookup might look like a simple array indexing operation. To route this operation to the customized hardware correctly, we leverage a specialized configuration called SparsecoreConfig. This specific object tells the compiler to physically map the embedding table to the dedicated Static Random-Access Memory of the SparseCore.

import jax

import jax.numpy as jnp

from jax.experimental import sparsecore

# Define the dimensionality of our business problem natively

vocab_size = 50_000_000 # 50 million items in the product catalog

embedding_dim = 128

batch_size = 8192

# Configure the SparseCore hardware mapping securely.

# This tells XLA to allocate this table on the SparseCore memory hierarchy.

config = sparsecore.SparsecoreConfig(

table_name="item_catalog_embeddings",

embedding_dimension=embedding_dim,

vocabulary_size=vocab_size,

partitioning_strategy=sparsecore.PartitioningStrategy.MODULAR

)

def init_embedding_table(key):

# The compiler reads the sparsecore_placement annotation physically

# and routes the memory allocation to the dedicated silicon natively.

with sparsecore.sparsecore_placement(config):

return jax.random.normal(key, (vocab_size, embedding_dim))

def sparse_lookup_step(embedding_table, input_ids):

# This explicit gather operation will be executed by the hardware

# completely asynchronously from the main TPU compute core.

return sparsecore.gather(embedding_table, input_ids, config)Let us pause and examine what is happening mathematically here. We are not writing boilerplate networking code to fetch gradients across a distributed cluster. We are defining a declarative configuration that the local compiler understands deeply.

The PartitioningStrategy.MODULAR flag is particularly critical for massively large deployments. When you have fifty million items, the entire table will easily exceed the physical capacity of a single TPU chip natively. The modular strategy tells the underlying compiler mechanism to automatically shard this massive table across the high-speed internal network of the TPU pod securely. The developer does not manually manage the routing tables personally. The compiler handles the collective network communication entirely under the hood.

Manual Sharding for Extreme Edge Cases

While SparsecoreConfig handles the overwhelming majority of standard use cases beautifully, there are specialized scenarios where the practitioner absolutely needs manual control over the memory layout directly. Some recommendation topologies feature extreme imbalances mathematically. Perhaps one categorical feature only has ten possible values, while another features completely unbounded growth passing ten billion entries.

In these extreme deployment cases, automatic modular partitioning might accidentally create inefficient memory fragmentation internally. This is exactly where advanced JAX primitives like jax.shmap provide a necessary escape hatch for the seasoned operator.

from jax.experimental import shard_map

# Defining a manual mesh topology explicitly over the TPU Pod architecture

mesh = jax.sharding.Mesh(jax.devices(), ('data', 'model'))

@shard_map.shard_map(mesh=mesh, in_specs=..., out_specs=...)

def custom_sparse_kernel(local_embeddings, local_ids):

# Practitioners can define exact routing logic here manually

# overriding the default compiler heuristics entirely.

# This specifically allows for custom collision resolution

# algorithms intelligently optimized for the serving pipeline locally.

passUsing jax.shmap drops you significantly closer to the metal natively. You explicitly define how the multi-dimensional tensor maps physically to the two-dimensional mesh of TPU runtime chips. It is a highly powerful tool when you are squeezing the absolute final drop of performance out of a specialized click-through rate model locally. You embrace the complexity only when the automated routing heuristics of the compiler fail to provide the strictly necessary latency reduction.

The Economics of a Five-Fold Acceleration

Why does this specific hardware acceleration truly matter logically? Engineering vanity metrics mean absolutely nothing operationally if they do not directly translate to concrete business leverage eventually. Using SparseCores typically results in a sheer five-fold acceleration for embedding-heavy workloads natively. But the measurable speedup is only half the relevant story.

When you successfully reduce your embedding lookup latency from one hundred and fifty milliseconds to thirty milliseconds securely, you are instantaneously unlocking a massive latency budget. In the strictly governed service level agreements of modern ad serving or real-time catalog recommendations, you typically have around two hundred milliseconds total to return a finalized result to the requesting user. If the majority of that time is unfortunately eaten by simply moving data around in remote memory, you only have fifty milliseconds left for actual machine learning intelligence. You are permanently forced to use highly shallow, conceptually simplistic models natively.

By strategically freeing up those precious milliseconds, you structurally change the architectural paradigm. You can now reliably pipe those efficiently resolved embeddings into significantly heavier models directly. You can seamlessly incorporate heavy multimodal context right into the very same pass.

For example, instead of relying purely on purely statistical historical click data natively, you can now feed the highly precise SparseCore outputs into a lightweight multimodal model like Gemini 2.5 Flash seamlessly. You might smoothly pass the user’s localized embedding alongside real-time image features extracted directly from the product they are hovering over currently. This fundamentally allows the model to internally reason about visual similarity and nuanced semantic context on the fly smoothly. You could never previously afford the raw compute time strictly needed for multimodal sequence ranking if you were permanently stalled continuously on localized memory lookups. We are fundamentally raising the structural ceiling on algorithmic recommendation quality comprehensively. The raw infrastructure improvement directly enables a highly dramatic geometric increase in logical model complexity directly at the user application layer.

Operating the Abstraction safely with Node Resilience

When you reliably scale out to thousands of compute nodes actively on Vertex AI, hardware failure rapidly transitions from a rare anomaly to an absolute mathematical certainty. A worker node will inevitably drop off the network sporadically. A memory module will unexpectedly produce a parity error natively. In standard synchronous training loops, this efficiently causes a catastrophic pipeline stall locally. The entire replica group must completely halt, restore state from the latest Google Cloud Storage pipeline checkpoint natively, and painfully rebuild the computational graph locally.

However, when working closely with ultra-large sparse models seamlessly, restoring a multi-terabyte parameter state sequentially can take twenty minutes, utterly destroying operational service level objectives entirely. Building robustly resilient continuous training architectures on Vertex AI absolutely demands highly asynchronous state checkpointing smoothly.

JAX facilitates this workflow by allowing SparseCores to save their High Bandwidth Memory state to GCS parallel object storage in the background, isolated from the primary active training loop. This ensures that when a hardware fault occurs, the cluster can isolate the degraded node, remap the SparseCore memory topology onto the healthy replicas, and resume training operations with minimal downtime.

Final Alignment on the Evolving Infrastructure Layer

We are actively witnessing a thoroughly fascinating divergence currently in hardware architecture. For agonizing years, the collective industry chased purely single-threaded scalar performance optimally. Then, we pivotally chased massive multi-dimensional arrays of dense matrix multiplication units predictably for convolutional networks and large language models respectively. Now, as the highly concrete economic value of specialized personalization models vastly eclipses almost practically every other pure machine learning application natively, the underlying physical hardware is finally bending correctly toward the strictly specific mathematical needs of unstructured sparse data sequentially.

The intentional introduction of the advanced SparseCore is a deeply validated practical acknowledgment finally that structurally moving data logically is frequently significantly more economically costly natively than purely computing it locally. By safely providing highly dedicated structural silicon seamlessly and seamlessly pairing it completely with highly intelligent tightly integrated compiler support seamlessly, the painful operational friction of broadly scaling recommendations globally is drastically reduced optimally.

You are strictly no longer fighting against the underlying physical hardware comprehensively. You are successfully no longer securely writing heavily custom networking flow control primitives just to fetch user profiles manually. You simply define your precise logical data schema mathematically. You explicitly declare your hardware topology cleanly. Accelerated Linear Algebra structurally generates the definitively optimal mathematical routing locally. You directly back the critical latency budget absolutely required to natively build genuinely intelligent smoothly responsive local experiences rapidly for your millions of users confidently. The deep physical hardware infrastructure gracefully safely fades practically right into the ambient background locally, and you are finally smoothly left to safely strictly focus purely on the core business application logic optimally. That specific outcome is exactly the ultimate promise eventually of cleanly well-designed scalable operational abstraction safely.