Agentic AI · 8 min read

The Human-as-Orchestrator: Evolving HITL

Designing systems where humans provide strategic intent and override at checkpoints.

- The traditional Human in the Loop model, where humans manually review and approve individual AI actions, fundamentally limits the scale and velocity of autonomous systems.

- Enterprises must evolve from Human in the Loop to Human on the Loop, shifting the human role from a manual reviewer to a high-level strategic orchestrator.

- Effective agentic architectures require clearly defined strategic checkpoints where human operators inject intent, resolve high-level ambiguity, and manage exceptions, rather than micro-managing execution steps.

- This paradigm shift is absolutely essential for enterprises to realize the massive ROI of Agentic AI without sacrificing governance, security, or operational safety.

- The interfaces we use to interact with AI must evolve from simple chat boxes and approval dashboards into comprehensive, telemetry-rich command centers.

- Scaling autonomous workflows requires abandoning the "Trust but Verify" fallacy in favor of automated telemetry, deterministic sandboxes, and mathematically bounded risk envelopes.

For the last several years, enterprise AI strategy has clung tightly to a comforting security blanket. We call it Human in the Loop.

The premise sounds incredibly responsible. We deploy powerful AI models, but we ensure a human reviews the output before any action is taken. A customer support draft is generated by the model, but a human agent clicks the send button. A code snippet is authored by an AI assistant, but a senior developer carefully reviews the Pull Request before merging it.

This model was necessary when large language models were erratic and highly prone to hallucination. But as we transition rapidly into the era of agentic workflows (where systems are designed to reason, plan, and execute multi-step processes autonomously) the traditional Human in the Loop model becomes a fatal bottleneck.

If you deploy a swarm of specialized agents capable of executing ten thousand complex tasks an hour, but you require a human operator to manually approve each individual step, your throughput is artificially limited to human reading speed. You have built a Ferrari engine and installed it in a traffic jam.

As I argued extensively in Governance: The Human in the Loop Fallacy, scaling human reviewers linearly with AI output destroys the unit economics of automation. It defeats the entire purpose of deploying the technology.

We need a fundamental evolution in how we design these systems. We must move the human from being in the loop to being on the loop. We must design our software architecture for the Human as Orchestrator.

The Physics of the Bottleneck

Consider a multi-agent system designed specifically for automated threat hunting in cybersecurity.

In a traditional Human in the Loop setup, an agent scans network logs, identifies a potential anomaly, drafts a mitigation plan (for example, blocking a specific IP address across all firewalls), and sends an alert to a security analyst. The analyst reads the alert, reviews the logs manually, agrees with the plan, and clicks a button to approve the action.

This process is linear, synchronous, and incredibly slow. The human is a blocking function in the execution path. While the human is reading the logs, the threat actor is actively exfiltrating data.

In an orchestrated, Human on the Loop setup, the system architecture changes fundamentally. The agents are granted explicit, mathematically bounded autonomy to act within a predefined envelope of acceptable risk.

The human’s role shifts entirely from execution to strategy.
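One way to picture a bounded risk envelope is as a set of explicit, machine-checkable limits that every proposed action must pass before it runs without a human. The sketch below is illustrative only: the `RiskEnvelope` fields, the action names, and the numeric limits are assumptions made for this example, not values from any real deployment.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RiskEnvelope:
    """Hypothetical limits on what an agent may do without a human."""
    allowed_actions: frozenset[str]
    max_hosts_affected: int     # cap on the blast radius of a single action
    max_actions_per_hour: int   # cap on how fast the agent may act


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    hosts_affected: int


def within_envelope(action: ProposedAction, envelope: RiskEnvelope,
                    actions_this_hour: int) -> bool:
    """Return True only if every bound in the envelope is respected."""
    return (
        action.kind in envelope.allowed_actions
        and action.hosts_affected <= envelope.max_hosts_affected
        and actions_this_hour < envelope.max_actions_per_hour
    )


# Assumed example values for a threat-hunting agent.
envelope = RiskEnvelope(
    allowed_actions=frozenset({"block_ip", "quarantine_file"}),
    max_hosts_affected=50,
    max_actions_per_hour=200,
)
routine = ProposedAction(kind="block_ip", hosts_affected=3)
drastic = ProposedAction(kind="shutdown_subnet", hosts_affected=400)
```

Here `within_envelope(routine, envelope, 10)` passes and the agent proceeds on its own, while `drastic` falls outside the envelope and would be routed to a human.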

Designing Strategic Checkpoints

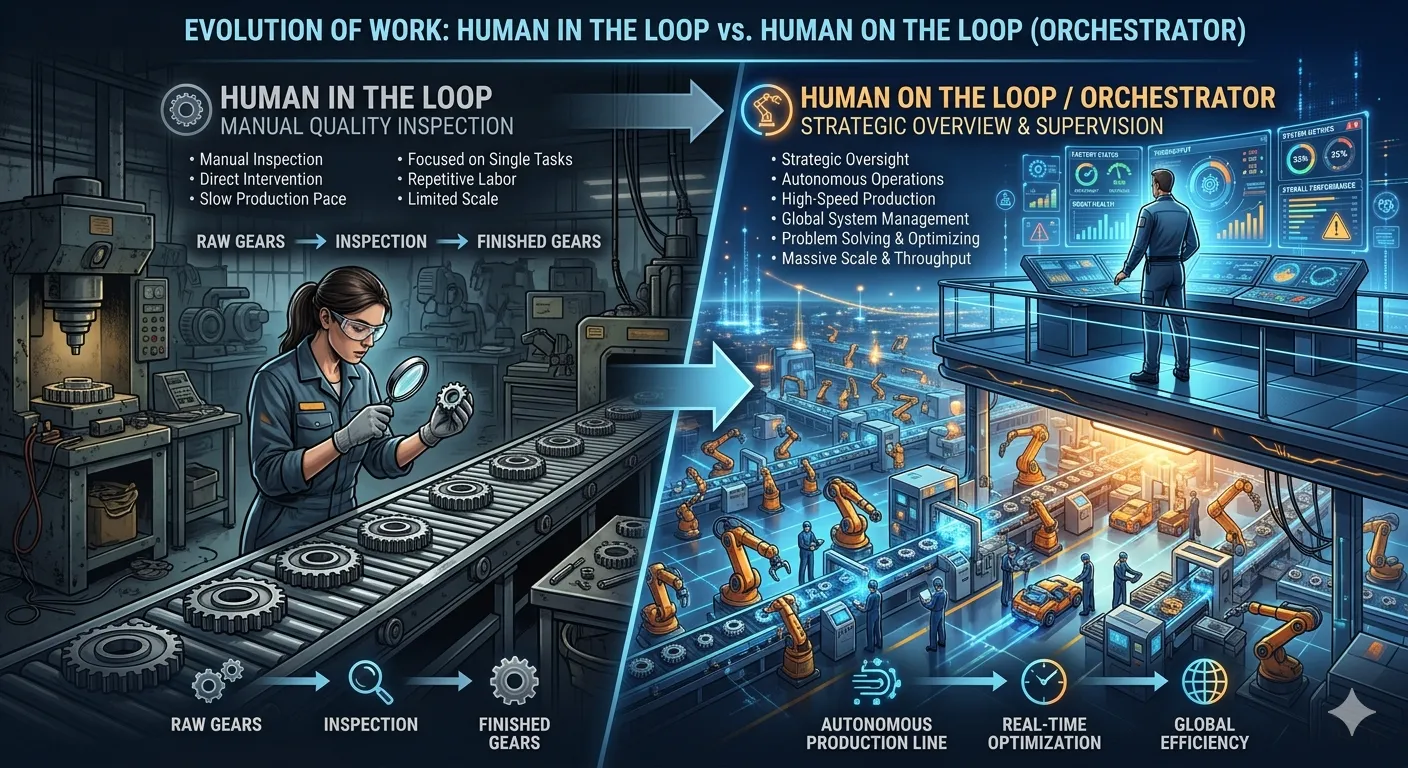

Explainer Diagram: An engaging conceptual infographic comparing “Human in the Loop” (a worker manually inspecting gears on a slow assembly line) versus “Human on the Loop / Orchestrator” (a commander looking over a massive, autonomous, glowing factory from a high-tech control deck).

To build a truly orchestrated system, you do not simply remove human oversight. You elevate it. You replace manual, low-level approval gates with high-level strategic checkpoints.

This requires engineering the agentic workflow to operate completely independently until it hits a hard boundary of high ambiguity, extreme financial risk, or a need for strategic intent that cannot be derived from its prompt instructions.

1. Injecting Strategic Intent

Instead of reviewing drafts, the orchestrator sets the parameters and goals of the campaign.

Think of it exactly like managing a team of highly capable senior engineers. You do not stand over their shoulders and review every keystroke they type. You set the sprint goals, you allocate the computational budget, and you clearly state the definition of done.

In our threat hunting example, the orchestrator does not approve individual IP blocks. Instead, they set the strategic posture for the system. They issue a command: “For the next forty-eight hours, escalate all automated responses related to the newly disclosed CVE, but maintain standard autonomous blocking for all known botnet signatures.”

The human provides the broader business context that the agents fundamentally lack.
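A posture command like the one above can be captured as a small, time-boxed directive that the agents consult before choosing a response level. This is a minimal sketch under stated assumptions: `PostureDirective`, the tag names `"new-cve"` and `"known-botnet"`, the level names, and the issue date are all hypothetical placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

DEFAULT_LEVEL = "standard"  # assumed name for the normal autonomous posture


@dataclass(frozen=True)
class PostureDirective:
    """A human-issued override that expires on its own."""
    threat_tag: str
    response_level: str
    expires_at: datetime


def response_level_for(threat_tag: str, directives: list[PostureDirective],
                       now: datetime) -> str:
    """Use an unexpired directive if one matches, else the default posture."""
    for directive in directives:
        if directive.threat_tag == threat_tag and now < directive.expires_at:
            return directive.response_level
    return DEFAULT_LEVEL


# Arbitrary example timestamp; the directive lasts forty-eight hours.
issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
directives = [
    PostureDirective("new-cve", "escalated", issued + timedelta(hours=48)),
]
```

Because the directive carries its own expiry, the system falls back to the standard posture after forty-eight hours without anyone having to remember to undo it.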

2. Managing Exceptions, Not The Rule

Agentic systems should handle the vast majority of routine, predictable workloads completely autonomously. The human orchestrator exists strictly to handle the edge cases.

This requires building incredibly robust confidence scoring and anomaly detection directly into the agent loop.

If an agent encounters a scenario it has never seen before, or if its internal “Judge Agent” (as discussed in depth in Multi-Agent Conflict Resolution) detects high uncertainty in a proposed action, the system pauses. It stops execution and formally requests human intervention.

The human is no longer a reviewer of the mundane; they are the ultimate exception handler for the highly complex. They are called in only when the machine admits it does not know the answer.
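The pause-and-escalate rule described above reduces to a simple routing decision. The following is a sketch, not a prescribed design: the `Assessment` fields and the 0.9 threshold are assumptions chosen for illustration, and a real judge would have to produce calibrated confidence values for the threshold to mean anything.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"    # agent proceeds autonomously
    ESCALATE = "escalate"  # agent pauses and requests a human


@dataclass(frozen=True)
class Assessment:
    """Hypothetical output of a judge step for one proposed action."""
    confidence: float  # 0.0 to 1.0
    is_novel: bool     # True if the scenario matches nothing seen before


def route(assessment: Assessment, min_confidence: float = 0.9) -> Decision:
    """Escalate on novelty or low confidence; otherwise let the agent act."""
    if assessment.is_novel or assessment.confidence < min_confidence:
        return Decision.ESCALATE
    return Decision.EXECUTE
```

Note that novelty escalates regardless of confidence: an agent that is highly confident about a situation it has never seen is exactly the case a human should look at.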

3. Auditing the Reasoning Trajectory

When the system does operate autonomously, the orchestrator does not waste time looking at the final output. They audit the reasoning trajectory.

If an agentic system makes an autonomous decision that results in an unexpected outcome, the orchestrator must be able to review the underlying logic. They review the internal scratchpad memory of the agent, the specific tool calls it made, the API responses it received, and the exact context window that led to the decision.

They then adjust the system prompts, update the available tools, or refine the boundary constraints to prevent a recurrence. The orchestrator is tuning the machine itself, not performing the machine’s task.
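Auditing a trajectory is only possible if the agent records it in a structured form. As a rough sketch, a per-run record might hold the scratchpad notes, tool calls, and responses mentioned above. The class names, field names, and the `lookup_ip_reputation` tool are hypothetical.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolCall:
    tool: str
    arguments: dict
    response: str


@dataclass
class TrajectoryRecord:
    """Hypothetical audit record for one autonomous decision."""
    run_id: str
    context_summary: str                       # what was in the context window
    scratchpad: list[str] = field(default_factory=list)
    tool_calls: list[ToolCall] = field(default_factory=list)
    decision: str = ""

    def to_json(self) -> str:
        """Serialize the full trajectory for storage or later review."""
        return json.dumps(asdict(self), sort_keys=True)


record = TrajectoryRecord(run_id="run-001",
                          context_summary="firewall logs, last 15 minutes")
record.scratchpad.append("Repeated failed logins from a single address.")
record.tool_calls.append(
    ToolCall("lookup_ip_reputation", {"ip": "198.51.100.7"}, "known scanner")
)
record.decision = "block_ip"
```

Storing the record as JSON keeps it searchable, so the orchestrator can pull every run that ended in a given decision and compare how the agent got there.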

The Fallacy of ‘Trust But Verify’ at Scale

A common counter-argument to full autonomy is the doctrine of “Trust but Verify.” The idea is that we let the agents run, but we have a human sample the outputs to ensure quality.

At enterprise scale, this falls apart completely. When your agentic mesh is making fifty thousand decisions a day, human verification of even a five percent sample means 2,500 manual reviews every day, which requires an entire department of manual labor. Furthermore, human reviewers suffer from review fatigue and automation complacency. When a reviewer sees one hundred correct actions in a row, they are very likely to rubber-stamp the one hundred and first action, even if it is a catastrophic error.

Verification must be automated. We must replace human sampling with deterministic testing, programmatic invariants, and secondary “Auditor Agents” that run parallel to the primary execution path. The human orchestrator reviews the aggregated metrics generated by these Auditor Agents, not the raw execution data.
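Programmatic invariants are the simplest of these mechanisms to illustrate: rules that must hold for every action, checked by code rather than by sampling. The sketch below assumes each action is a plain dictionary with `target` and `ttl_hours` keys, and the two rules themselves are invented for the example. An auditor would run these checks over all actions and hand the orchestrator only the aggregate.

```python
from collections import Counter
from ipaddress import ip_address, ip_network
from typing import Callable

INTERNAL_RANGE = ip_network("10.0.0.0/8")  # assumed internal address space

# Each invariant returns True when the action is acceptable.
INVARIANTS: dict[str, Callable[[dict], bool]] = {
    "never_block_internal_range":
        lambda a: ip_address(a["target"]) not in INTERNAL_RANGE,
    "ttl_is_bounded":
        lambda a: 0 < a["ttl_hours"] <= 72,
}


def audit(actions: list[dict]) -> dict:
    """Check every action against every invariant; return aggregate counts."""
    violations: Counter = Counter()
    for action in actions:
        for name, check in INVARIANTS.items():
            if not check(action):
                violations[name] += 1
    return {"total": len(actions), "violations": dict(violations)}


report = audit([
    {"target": "198.51.100.7", "ttl_hours": 24},   # satisfies both rules
    {"target": "10.0.0.5", "ttl_hours": 24},       # internal address
    {"target": "198.51.100.9", "ttl_hours": 500},  # block lasts too long
])
```

Unlike a five percent sample, this check covers every action, and the orchestrator reads a two-line report instead of thousands of raw records.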

The Orchestrator’s Toolbelt: Sandboxes and Simulators

To be an effective orchestrator, the human needs an entirely new set of tools. They cannot simply deploy new system prompts directly into the live production environment and hope for the best. The risk of unintended behavioral drift is far too high.

Before a human orchestrator authorizes a change in agent behavior, they must test it extensively. This is where high-fidelity simulation environments become absolutely critical to the enterprise stack.

The orchestrator uses a sandboxed simulation engine that mirrors the production environment as closely as possible, complete with synthetic data and mock API endpoints. When they tweak a prompt or adjust a confidence threshold, they replay thousands of simulated scenarios against the agent before anything touches production. The simulation engine provides a detailed report showing how the behavioral change impacts the agent’s actions, cost burn rate, and error frequency.

Only when the new parameters behave predictably across the simulated scenarios does the orchestrator push the configuration changes to the live production mesh. A simulator cannot prove the absence of surprises, but it catches regressions before customers do. This transforms the orchestrator from a passive reviewer into an active engineer of systemic behavior.
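The promote-only-if-the-simulation-holds workflow can be sketched as replaying the same labeled scenarios under the current and the proposed configuration, then comparing the reports. Everything here is a simplification for illustration: the `Scenario` fields, the thresholds, and the promotion rule are assumptions, and a real simulator would run the agent itself rather than read a stored confidence value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One synthetic case with a known ground-truth label."""
    confidence: float        # confidence the simulated agent reports
    action_is_correct: bool  # whether acting autonomously would be right


def simulate(scenarios: list[Scenario], min_confidence: float) -> dict:
    """Replay scenarios under a threshold and summarize the outcome."""
    executed = [s for s in scenarios if s.confidence >= min_confidence]
    errors = sum(1 for s in executed if not s.action_is_correct)
    return {
        "autonomous": len(executed),
        "escalated": len(scenarios) - len(executed),
        "errors": errors,
    }


def safe_to_promote(baseline: dict, candidate: dict) -> bool:
    """Assumed rule: a change must not add autonomous errors."""
    return candidate["errors"] <= baseline["errors"]


scenarios = [
    Scenario(0.95, True),
    Scenario(0.92, True),
    Scenario(0.85, False),
    Scenario(0.80, True),
    Scenario(0.70, False),
]
baseline = simulate(scenarios, min_confidence=0.90)
candidate = simulate(scenarios, min_confidence=0.75)
```

Lowering the threshold from 0.90 to 0.75 doubles the autonomous actions in this toy data set but lets one incorrect action through, so `safe_to_promote(baseline, candidate)` returns `False` and the change stays in the sandbox.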

Building the Agentic Telemetry Dashboard

This massive shift in operating models requires entirely new software interfaces. We are moving far away from simplistic dashboards that show lists of “Pending Approvals.” We are moving toward complex command centers that show deep system telemetry and boundary exceptions.

The user interface must allow the orchestrator to visualize the entire agent mesh in real-time. It must display the current token burn rate, the latency of tool calls, and the confidence scores of the active decision loops.

It must allow them to adjust risk thresholds dynamically, pause specific execution branches, and trace complex execution paths. If an agent goes rogue or begins hallucinating, the orchestrator must have a hard “kill switch” that revokes the agent’s API access immediately. It is less like an email inbox and much more like an air traffic control radar screen.
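A kill switch of this kind works only if every tool call passes through a single chokepoint that checks it. The following is a minimal in-process sketch with hypothetical names (`KillSwitch`, `call_tool`, `AgentHalted`). A production system would more likely revoke credentials at the API gateway, which this example does not attempt to show.

```python
import threading
from typing import Callable


class AgentHalted(RuntimeError):
    """Raised when a halted agent attempts a tool call."""


class KillSwitch:
    """Thread-safe registry of agents whose access has been revoked."""

    def __init__(self) -> None:
        self._revoked: set[str] = set()
        self._lock = threading.Lock()

    def trip(self, agent_id: str) -> None:
        with self._lock:
            self._revoked.add(agent_id)

    def is_revoked(self, agent_id: str) -> bool:
        with self._lock:
            return agent_id in self._revoked


def call_tool(agent_id: str, tool: Callable[[], str],
              kill_switch: KillSwitch) -> str:
    """Single chokepoint: every tool call checks the switch first."""
    if kill_switch.is_revoked(agent_id):
        raise AgentHalted(f"{agent_id} has been halted by the orchestrator")
    return tool()
```

Once the orchestrator calls `trip("agent-7")`, every later `call_tool` for that agent raises `AgentHalted` instead of reaching the tool, while other agents keep running.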

The enterprise that wins the next decade will not be the one with the most humans in the loop clicking approve. It will be the enterprise that most effectively leverages a small, highly skilled team of human orchestrators to command a massive, fully autonomous agentic workforce.

We must stop treating humans as biological API endpoints designed to check boxes. We must elevate them to strategists, allowing the machines to do the heavy lifting while the humans chart the course.