AI Engineering · 8 min read

RadixAttention vs PagedAttention: The New Frontier in Context Management

A deep dive into the mechanics of SGLang's RadixAttention and why it represents a breakthrough for multi-turn agentic workflows compared to vLLM's PagedAttention.

TL;DR: As multi-turn conversations and complex agentic workflows become the norm, managing the KV cache effectively is the primary bottleneck in LLM serving. While vLLM’s PagedAttention revolutionized the field by solving memory fragmentation, SGLang’s RadixAttention takes it a step further by treating the cache as a tree of shared prefixes. This lets requests share a system prompt without recomputing or duplicating its cache, and makes branching in multi-agent loops cheap, representing a fundamental shift from stateless to stateful serving infrastructure.

Let us talk about the physics of serving large language models. If you have moved past the stage of calling external APIs and are now running your own inference servers, you know that the bottleneck is not compute; it is memory. Specifically, it is the KV (Key-Value) cache.

Every time a model generates a token, it needs to look at all the previous tokens in the conversation. To avoid recalculating the attention for those previous tokens on every step, we store their Key and Value vectors in memory. This is the KV cache.

In a multi-turn conversation or a complex agentic workflow, the KV cache grows rapidly. If you are not careful, it will consume all the VRAM left on your GPU once the model weights are loaded, leaving no room for serving other users.
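To get a feel for how fast this grows, here is a back-of-the-envelope sketch. The formula (two vectors per layer and KV head, times the head dimension and the bytes per value) is standard; the model shape below is an assumption chosen for illustration, roughly a 7B-class model with full multi-head attention in fp16, not a figure from any specific deployment.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       dtype_bytes: int = 2) -> int:
    """Bytes of KV cache that a single token occupies across all layers."""
    # Factor of 2: one Key vector and one Value vector per layer and KV head.
    return 2 * num_layers * num_kv_heads * head_dim * dtype_bytes


# Assumed model shape (illustrative only).
per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)
print(per_token)  # 524288 bytes, i.e. 0.5 MiB per token


def kv_gib(num_tokens: int) -> float:
    """KV cache size in GiB for a sequence of num_tokens tokens."""
    return num_tokens * per_token / 2**30


print(kv_gib(4096))  # 2.0 GiB for one 4,096-token conversation
```

Under these assumptions, a handful of long conversations is enough to fill whatever VRAM remains after the weights. Models that use grouped-query attention have fewer KV heads and a proportionally smaller cache.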

A year ago, the gold standard for managing this memory was PagedAttention (which we previously compared with RingAttention), introduced by the vLLM (v0.4.x or later) project. It was a massive breakthrough. But as we move into the era of complex, multi-agent reasoning loops, a new contender has emerged: RadixAttention, the core innovation behind the SGLang (v0.2.x or later) project.

To understand why this matters, we need to look at how these two systems manage the history of your conversation.

PagedAttention: The Virtual Memory Solution

To understand PagedAttention, it helps to remember how operating systems handle RAM.

In the early days of computing, programs expected to get a single, contiguous block of memory. If the memory was fragmented, the program would fail, even if there was enough total free memory scattered around. Operating systems solved this by introducing virtual memory and paging. They divided physical memory into small, fixed-size pages and mapped the program’s virtual address space to those pages.

PagedAttention does the exact same thing for the KV cache of an LLM.

Before PagedAttention, systems allocated a contiguous block of memory for the maximum possible context length of a request. If the user only sent a ten-token prompt, the rest of the allocated memory was wasted. This was called internal fragmentation.

PagedAttention divides the KV cache into blocks. Each block contains the KV vectors for a fixed number of tokens (say, 16 tokens). As the conversation grows, the system allocates new blocks on demand from a memory pool. These blocks do not need to be contiguous in physical VRAM. The system maintains a mapping table, just like an OS page table.
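The block table idea can be sketched in a few lines. This is a toy model of the bookkeeping only, not vLLM's implementation: `BlockAllocator` and `Sequence` are hypothetical names, and no actual KV tensors are stored.

```python
BLOCK_SIZE = 16  # tokens per block, matching the example in the text


class BlockAllocator:
    """Hands out physical block ids from a fixed pool (hypothetical helper)."""

    def __init__(self, num_blocks: int):
        # Reversed so that pop() yields block 0 first, then 1, 2, ...
        self.free = list(range(num_blocks - 1, -1, -1))

    def allocate(self) -> int:
        if not self.free:
            raise MemoryError("KV cache pool exhausted")
        return self.free.pop()


class Sequence:
    """One request: a logical token stream mapped onto physical blocks."""

    def __init__(self, allocator: BlockAllocator):
        self.allocator = allocator
        self.block_table = []  # logical block index -> physical block id
        self.num_tokens = 0

    def append_token(self) -> None:
        # A new block is allocated only when the previous one is full.
        if self.num_tokens % BLOCK_SIZE == 0:
            self.block_table.append(self.allocator.allocate())
        self.num_tokens += 1

    def physical_slot(self, token_index: int):
        """Translate a logical token position to (physical block, offset)."""
        return self.block_table[token_index // BLOCK_SIZE], token_index % BLOCK_SIZE


allocator = BlockAllocator(num_blocks=8)
a, b = Sequence(allocator), Sequence(allocator)
for _ in range(10):
    a.append_token()  # a takes block 0
for _ in range(20):
    b.append_token()  # b takes blocks 1 and 2
for _ in range(10):
    a.append_token()  # a reaches 20 tokens and takes block 3

print(a.block_table)        # [0, 3]
print(b.block_table)        # [1, 2]
print(a.physical_slot(17))  # (3, 1)
```

Sequence `a` ends up in blocks 0 and 3, which are not adjacent, and at most one partially filled block per sequence is ever wasted.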

The vLLM authors reported throughput gains of 2-4x over earlier serving systems at comparable latency, because cutting memory waste to near zero leaves room for much larger batches. It was a game-changer.

But PagedAttention was designed for a specific workflow: a user sends a prompt, the model generates a response, and the session ends. It treats every request as an independent sequence of tokens.

The Agent Problem: Shared Prefixes

Now let us look at what happens when we build agents.

In an agentic workflow, we often have a massive system prompt that defines the agent’s persona, tools, and safety rules. This system prompt might be 2,000 tokens long.

If we are running a swarm of ten agents, all using the same system prompt, a PagedAttention system with no prefix sharing will allocate memory for that 2,000-token prompt ten times, once for each agent’s request.

Even worse, imagine a multi-turn conversation where an agent is trying to debug code.

- Turn 1: “Here is the code. Why does it fail?” (Model generates 500 tokens).

- Turn 2: “Okay, now try changing line 10.” (Model needs to read the code, the first response, and the new instruction).

In a naive system, when Turn 2 arrives, the system calculates the KV cache for the entire history again, even though most of it was processed in Turn 1.

PagedAttention-based servers introduced “Prefix Caching” to solve this, but it is typically implemented as a flat hash map keyed on the exact prefix tokens, usually one fixed-size block at a time. If a prefix matches exactly, its cached blocks can be reused. But the table has no notion of how sequences relate to each other, so it does not directly model minor variations or a conversation that branches.
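A hash-based prefix cache over paged blocks can be sketched as follows. This is an illustration under assumptions, not the code of vLLM or any other server: `PrefixCache` and `block_keys` are hypothetical names, the block size is shrunk to 4 for readability, and the "physical block" is just a counter.

```python
import hashlib

BLOCK_SIZE = 4  # deliberately small so the example is easy to follow


def block_keys(tokens):
    """One key per full block; each key covers that block and all tokens before it."""
    keys, h = [], hashlib.sha256()
    full = len(tokens) - len(tokens) % BLOCK_SIZE
    for start in range(0, full, BLOCK_SIZE):
        # Chaining the hash makes the key depend on the entire prefix so far.
        h.update(repr(list(tokens[start:start + BLOCK_SIZE])).encode())
        keys.append(h.hexdigest())
    return keys


class PrefixCache:
    def __init__(self):
        self.blocks = {}  # key -> physical block id (a plain counter here)

    def lookup_and_insert(self, tokens) -> int:
        """Return how many leading tokens were served from the cache."""
        hit_tokens, still_matching = 0, True
        for key in block_keys(tokens):
            if still_matching and key in self.blocks:
                hit_tokens += BLOCK_SIZE
            else:
                still_matching = False
                self.blocks.setdefault(key, len(self.blocks))
        return hit_tokens


cache = PrefixCache()
system = list(range(8))  # an 8-token shared system prompt
print(cache.lookup_and_insert(system + [100, 101, 102, 103]))  # 0 (cold cache)
print(cache.lookup_and_insert(system + [200, 201, 202, 203]))  # 8 (prompt blocks reused)
print(cache.lookup_and_insert(system[:6] + [300, 301]))        # 4 (only the first block matches)
```

The table is flat: it can answer "have I seen exactly this prefix block?" but it holds no parent or child links, so anything that depends on the shape of the conversation has to be handled elsewhere.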

RadixAttention: The Tree of Life

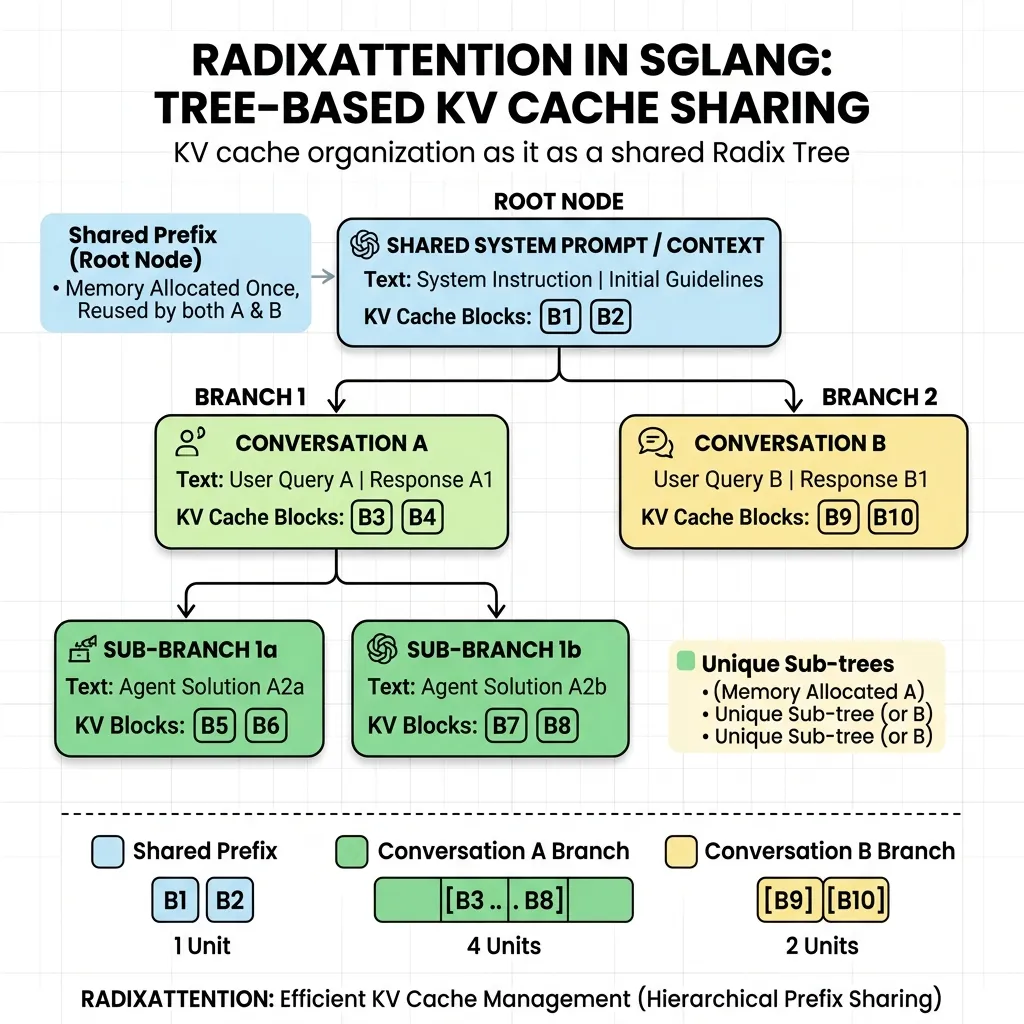

This is where SGLang’s RadixAttention steps in. It does not treat the KV cache as a flat list of pages. It treats it as a Radix Tree (or compressed trie).

A radix tree is a data structure where the edges are sequences of characters (or in this case, tokens) rather than single characters. It is incredibly efficient for storing sets of strings that share common prefixes.

In SGLang, the server maintains a global radix tree over the KV cache of every request it has processed and not yet evicted.

When a new request arrives, the system does not just look for an exact match of the whole prompt. It traces the prompt tokens through the radix tree to find the longest common prefix that is already present in the cache.

Let us look at the agent scenario again.

- Root: System Prompt (Cached).

- Branch A: Agent 1’s specific task and conversation history.

- Branch B: Agent 2’s specific task and conversation history.

Because both branches share the same root (the system prompt), RadixAttention only stores the KV cache for the system prompt once. Both agents share that memory. The system only allocates new blocks for the unique parts of Branch A and Branch B.
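The longest-prefix lookup and the shared system prompt can be sketched with a small radix tree over token ids. This is a minimal illustration, not SGLang's data structure: `RadixNode` and `RadixTree` are hypothetical names, and the nodes hold only token ids, whereas a real server would also attach the KV cache for those tokens to each node.

```python
class RadixNode:
    def __init__(self, tokens=()):
        self.tokens = tuple(tokens)  # edge label: a run of token ids
        self.children = {}           # first token of a child's edge -> child node


def _common_len(a, b) -> int:
    """Number of leading elements that a and b have in common."""
    n = 0
    while n < len(a) and n < len(b) and a[n] == b[n]:
        n += 1
    return n


class RadixTree:
    def __init__(self):
        self.root = RadixNode()

    def match_prefix(self, tokens) -> int:
        """Length of the longest cached prefix of `tokens`."""
        tokens = tuple(tokens)
        node, matched = self.root, 0
        while matched < len(tokens):
            child = node.children.get(tokens[matched])
            if child is None:
                break
            n = _common_len(child.tokens, tokens[matched:])
            matched += n
            if n < len(child.tokens):
                break  # diverged partway along this edge
            node = child
        return matched

    def insert(self, tokens) -> None:
        tokens = tuple(tokens)
        node, i = self.root, 0
        while i < len(tokens):
            child = node.children.get(tokens[i])
            if child is None:
                node.children[tokens[i]] = RadixNode(tokens[i:])
                return
            n = _common_len(child.tokens, tokens[i:])
            if n < len(child.tokens):
                # Split the edge: the shared part stays, the tail becomes a child.
                tail = RadixNode(child.tokens[n:])
                tail.children = child.children
                child.tokens = child.tokens[:n]
                child.children = {tail.tokens[0]: tail}
            node, i = child, i + n


tree = RadixTree()
system = [1, 2, 3, 4]            # stands in for the shared system prompt
tree.insert(system + [10, 11])   # Branch A: agent 1
tree.insert(system + [20, 21])   # Branch B: agent 2, splits the edge after the prompt

print(tree.root.children[1].tokens)          # (1, 2, 3, 4), stored once
print(tree.match_prefix(system + [10, 99]))  # 5
print(tree.match_prefix(system + [30]))      # 4
print(tree.match_prefix([7, 8]))             # 0
```

After the second insert, the system prompt lives on a single edge with two children, which is exactly the root-plus-branches picture above. A new request only needs fresh blocks for the tokens past the matched length.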

But it gets better. RadixAttention supports Forking.

Imagine an agent exploring two different solutions to a coding problem. It generates Solution A, evaluates it, and then wants to go back to the state before Solution A and try Solution B.

In a PagedAttention system with simple prefix caching, moving back to the previous state usually means recalculating the attention because the specific sequence changed.

In RadixAttention, the system simply creates a new branch in the tree from the point of divergence. The history up to the fork point is shared. The memory for Solution A remains in the tree (until the eviction policy reclaims it), and the memory for Solution B is allocated on a new branch. As long as those branches stay cached, the agent can jump between them without recomputing the shared history.

A Tale of Two Swarms

To understand the real-world impact, consider a team of engineers using an AI swarm to translate a legacy codebase from Java to Go. The system prompt, containing the translation rules and Go best practices, is 4,000 tokens. The source file being translated is 2,000 tokens.

In a PagedAttention setup, if you spin up 5 parallel agents to work on different parts of the translation, the server needs to allocate KV cache for 6,000 tokens for each agent. That is 30,000 tokens of memory immediately consumed before a single token of Go code is generated.

With RadixAttention, the 6,000 tokens representing the system prompt and the source file are loaded into the tree once. The 5 agents are simply 5 active leaves sprouting from that same branch. The total memory consumed at the start is just 6,000 tokens, plus a tiny amount of overhead for the tree pointers. You have just reduced the memory footprint of the shared context by 80%.
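The arithmetic is easy to check. The token counts come from the scenario above; the conversion to gigabytes relies on an assumed 0.5 MiB of KV cache per token (an fp16 model with 32 layers, 32 KV heads, and a head dimension of 128), which is an illustrative figure rather than a measurement.

```python
SYSTEM_PROMPT = 4_000  # tokens
SOURCE_FILE = 2_000    # tokens
AGENTS = 5

shared_prefix = SYSTEM_PROMPT + SOURCE_FILE
per_request_copy = AGENTS * shared_prefix  # every agent holds its own copy
shared_once = shared_prefix                # one copy in the tree
saving = 1 - shared_once / per_request_copy

print(per_request_copy, shared_once, f"{saving:.0%}")  # 30000 6000 80%

# Assumption: 0.5 MiB of KV cache per token (see the lead-in).
MIB_PER_TOKEN = 0.5
copied_gib = per_request_copy * MIB_PER_TOKEN / 1024
shared_gib = shared_once * MIB_PER_TOKEN / 1024
print(round(copied_gib, 1), round(shared_gib, 1))  # 14.6 2.9
```

For N agents sharing one prefix, the saving on that prefix is 1 - 1/N, so it climbs toward 100% as the swarm grows. The tokens each agent generates on its own branch are not shared and still cost memory.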

This is not just an optimization; for a large enough swarm, it can be the difference between running on a single GPU and needing a multi-node cluster. It allows you to build denser, more capable swarms without scaling your hardware costs linearly.

The Eviction Policy: LRU on a Tree

Because the radix tree can grow to fill all available VRAM, SGLang implements an eviction policy. But unlike PagedAttention, which evicts pages, RadixAttention evicts nodes in the tree.

It uses a modified Least Recently Used (LRU) strategy. If memory is full, it finds the leaf nodes that have not been accessed recently and evicts them. Because the data is structured as a tree, evicting a leaf node does not affect the shared parent nodes. The system prompt (the root) remains cached because it is accessed by every new request, while the details of a specific conversation from an hour ago are dropped.
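Leaf-first LRU eviction can be sketched on a toy tree. This is a simplified model under assumptions, not SGLang's code: `Node`, `touch`, and `evict` are hypothetical names, a counter stands in for wall-clock time, and the sketch omits things a real server needs, such as protecting nodes that in-flight requests are still using.

```python
import itertools

_clock = itertools.count()  # logical clock standing in for wall time


class Node:
    def __init__(self, name, num_tokens, parent=None):
        self.name, self.num_tokens, self.parent = name, num_tokens, parent
        self.children = []
        self.last_access = next(_clock)
        if parent is not None:
            parent.children.append(self)


def touch(node):
    """A request that uses `node` also uses every ancestor on its path."""
    t = next(_clock)
    while node is not None:
        node.last_access = t
        node = node.parent


def leaves(node):
    if not node.children:
        return [node]
    return [leaf for child in node.children for leaf in leaves(child)]


def evict(root, tokens_needed):
    """Drop least recently used leaves until enough tokens are freed."""
    freed, evicted = 0, []
    while freed < tokens_needed:
        candidates = [n for n in leaves(root) if n is not root]
        if not candidates:
            break  # only the root is left; this sketch never evicts it
        victim = min(candidates, key=lambda n: n.last_access)
        victim.parent.children.remove(victim)
        freed += victim.num_tokens
        evicted.append(victim.name)
    return evicted


root = Node("system_prompt", 2000)
old_chat = Node("chat_from_an_hour_ago", 800, root)
turn1 = Node("debug_turn_1", 500, root)
turn2 = Node("debug_turn_2", 300, turn1)
touch(turn2)  # a recent request refreshes turn2, turn1, and the root

print(evict(root, 500))  # ['chat_from_an_hour_ago']
print(evict(root, 700))  # ['debug_turn_2', 'debug_turn_1']
print(root.children)     # [] (the system prompt itself is still cached)
```

The second call shows the ordering constraint: `debug_turn_1` becomes evictable only after its child is gone, so shared ancestors always outlive the branches that hang off them.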

Conclusion: The Infrastructure of Thought

If you are building simple chatbots, PagedAttention is still fantastic. It is mature, robust, and widely supported.

But if you are building the next generation of AI systems, systems where autonomous agents collaborate, explore branching hypotheses, and maintain long-running state, you need to look at RadixAttention.

By moving context management from a flat paging system to a structured tree system, SGLang has provided the infrastructure required for complex reasoning. It treats conversation history not as a disposable stream of text, but as a living structure to be explored and reused.

The choice between these two is not just a choice between two libraries. It is a fundamental choice between two different views of what AI is: a simple single-shot text generator, or a highly stateful, reasoning agent.