· AI Infrastructure · 10 min read

xAI Grok Architecture: The Case for JAX and Rust

Explore the xAI Grok model training architecture. Discover why xAI chose JAX and Rust over PyTorch, their SLA/uptime guarantees, and how it impacts extreme-scale training.

TL;DR: The decision by xAI to build their massive training stack on JAX and Rust rather than the industry-standard PyTorch represents a significant shift in frontier AI development. JAX provides the pure functional programming model and XLA compilation needed for extreme-scale matrix multiplication, while Rust handles cluster orchestration and data loading without Python overhead. This combination offers maximum throughput but demands a rare intersection of skills, challenging the dominance of legacy frameworks.

Let us talk about what it takes to train a frontier model today. We are not talking about fine-tuning a small model on a single GPU in your workstation. We are talking about orchestrating tens of thousands of GPUs, moving petabytes of data across InfiniBand fabrics, and keeping the system running without a crash for weeks at a time.

When xAI announced their Grok models, the community was interested in the performance benchmarks. But if you are a practitioner, you were looking at the infrastructure. You were looking at how they built it.

For the last few years, the standard answer for deep learning has been PyTorch. It is a beautiful framework. It gives you dynamic graphs. It has a massive ecosystem. If you are starting a new AI project today, PyTorch is the default choice.

But xAI did not choose the default. They built their training stack on a combination that seems unusual at first glance: JAX and Rust.

To understand why they made this choice, we need to look past the hype and examine the operational realities of extreme-scale training.

The Case for JAX: Compiling the World

To understand JAX, you have to understand the difference between how Python works and how high-performance hardware works.

Python is an interpreted language. It executes line by line. This is great for developer velocity because you can change things on the fly and see the results instantly. PyTorch embraces this with its eager execution mode. When you run a PyTorch operation, it happens right then.

But fixed-function hardware like a TPU or a modern GPU does not like line-by-line execution. It wants to see the whole graph of operations ahead of time so it can optimize the memory layout and the operator fusion.

JAX (v0.4.x or later) takes a different approach. JAX is not really a deep learning framework in the traditional sense. It is a system for expressing numerical transformations on arrays, backed by the XLA (Accelerated Linear Algebra) compiler.

In JAX, you write Python code that looks like NumPy. But when you execute it, JAX does not just run the Python. It traces your function and compiles it into a highly optimized set of machine instructions for your specific hardware.

This compilation step gives you two massive advantages at scale.

First, operator fusion. If you have a function that adds two matrices lines and then multiplies the result by a third matrix, standard Python will allocate memory for the intermediate result. JAX sees the whole sequence. The XLA compiler can fuse those operations together, keeping the data in the local registers of the GPU processing cores and avoiding expensive round-trips to global VRAM.

Second, reproducible randomness. In distributed training, keeping random seeds synchronized across ten thousand nodes is a nightmare. PyTorch handles this with stateful random number generators. JAX treats randomness as pure functions. You pass the random key as an argument, and you get a new key and the random numbers as output. It is deterministic. If a node fails and you need to restart the training from a checkpoint, you can guarantee that the re-run will produce the exact same numbers. This is a superpower for debugging cluster failures.

To understand the value of this, imagine a scenario that plays out regularly in large labs. You are training a model that costs ten thousand dollars an hour in compute. At step fifty-thousand, a rack of GPUs overheats and fails. The system automatically reloads the checkpoint from step forty-nine thousand and resumes. But when you look at the loss curve a day later, it has diverged. The model is ruined. You spend a week digging through logs only to discover that the random number generator state on the restarted nodes did not perfectly match the rest of the cluster after the reload. The dropout masks were slightly different.

In a system like PyTorch, where the random state is hidden inside the global environment, these kinds of silent, non-deterministic bugs are incredibly hard to track down. In JAX, because the random key is just another piece of data passed explicitly from function to function, the execution is perfectly reproducible. You can recreate the exact failure state on a single machine for debugging. This functional purity also makes it ideal for implementing complex low-level optimizations, such as micro-scaling for quantization.

The Rust Connection: The System Primitive

If JAX is the brain of the training stack, Rust is the nervous system.

You might wonder why you need Rust if JAX is handling the matrix multiplications. The answer is everything else that happens during training.

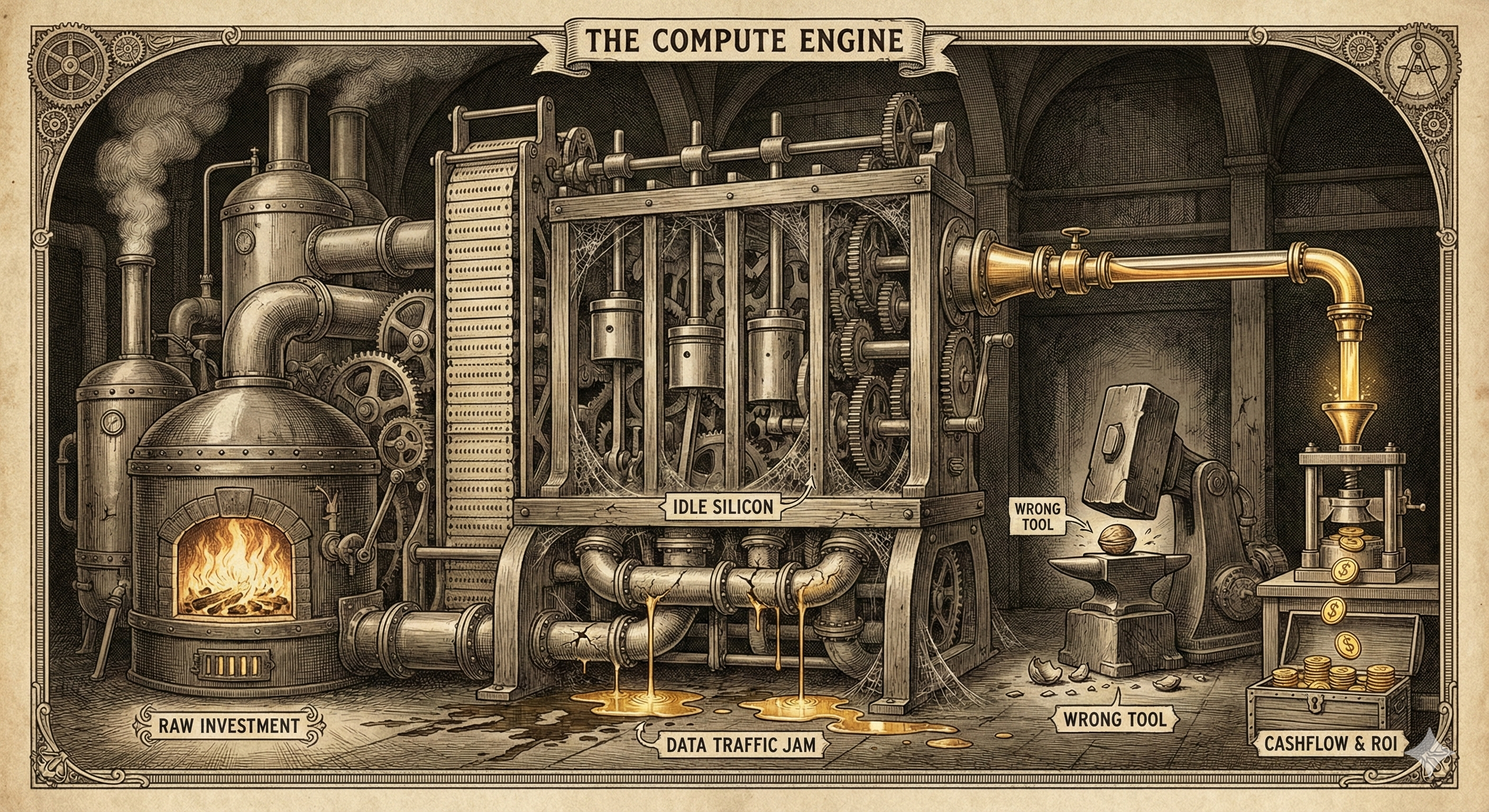

Training an LLM is not just about math. It is about data loading. You have to feed trillions of tokens into the cluster. If your data loader is slow, your expensive GPUs will sit idle, waiting for the next batch of tokens. This is called being “IO bound,” and it is the fastest way to blow your training budget.

Python is notoriously bad at multi-threaded data processing because of the Global Interpreter Lock (GIL). You can use multi-processing, but moving large tensors between Python processes incurs massive serialization overhead.

Rust ignores the GIL because it does not have one.

Rust gives you fearless concurrency. You can spin up dozens of threads to read data from distributed storage, tokenize it, and pack it into batches, all without worrying about memory leaks or race conditions. The type system guarantees memory safety at compile time.

But xAI did not just use Rust for data loading. They used it for cluster orchestration.

When you run a cluster of twenty thousand GPUs, hardware failures are not an exception; they are a daily occurrence. A chip fails. A network cable drops a packet. A cooling unit degrades.

If you rely on standard Python orchestration scripts, detecting these failures and rerouting traffic can take minutes. In that time, the rest of the cluster is idling, burning money.

By writing the orchestration layer in Rust, xAI can detect node failures in milliseconds. The system can instantly isolate the failing node, load the latest checkpoint to a spare node, and resume training. The overhead is minimal because the system is operating at the compiled level, not the interpreted level.

What About Golang?

Given Go’s reputation for excellent concurrency primitives (goroutines) and its dominance in cloud infrastructure (Kubernetes, Docker), you might wonder if it was considered for this stack. While there is no public record of xAI evaluating and rejecting Go, we can analyze why it falls short for this specific use case.

Go is fantastic for I/O-bound network services, but it introduces two major friction points for high-performance AI training:

- Garbage Collection Jitter: Go’s garbage collector is highly optimized, but it still introduces pauses. In a cluster of tens of thousands of GPUs operating in tight synchronization, a GC pause on a single node can create a “straggler” effect, slowing down the entire training step. Rust’s manual memory management (via its ownership model) ensures predictable, deterministic latency.

- C Interoperability Overhead: Data loading and orchestration often require talking directly to C libraries or low-level GPU APIs. Go’s

cgohas a non-trivial overhead because it must handle stack switching between Go and C. Rust’s C interop is zero-cost, making it ideal for tight integration with hardware-level libraries.

The Rust ML Ecosystem for Teams

If you are looking to replicate this stack or start using Rust for your AI infrastructure, you do not need to build everything from scratch. The ecosystem has matured significantly with several high-performance options:

- Data Processing: Polars is a blazingly fast DataFrame library in Rust that is quickly becoming the go-to alternative to Pandas for data preprocessing. DataFusion provides an extensible query execution framework.

- Data Parallelism: For CPU-bound tasks like tokenization, Rayon offers easy, data-parallel iterators that maximize multi-core performance without safety risks.

- ML Frameworks: Hugging Face has developed Candle, a minimalist ML framework in Rust focused on performance and ease of use. Another strong contender is Burn, a highly flexible deep learning framework that supports multiple backends, including WGPU for GPU acceleration.

- XLA Bindings: To bridge the gap between Rust and the JAX ecosystem, projects like xla-rs provide Rust bindings for the XLA compiler, allowing you to run XLA computations directly from Rust code.

Operational Realities: The Practitioner’s Tax

We have talked about the benefits, but we need to talk about the cost. There are no free lunches in infrastructure. If you choose to walk away from the PyTorch ecosystem, you are paying a heavy tax in two areas: talent and tooling.

The PyTorch ecosystem is massive. Every major model architecture (Llama, Mistral, Stable Diffusion) has a reference implementation in PyTorch. There are thousands of community libraries for quantization, acceleration, and evaluation. If you hit a bug, someone on stack overflow has already solved it.

The JAX ecosystem is much smaller. While Google uses it heavily internally for Gemini, the public ecosystem is just catching up. You often find yourself writing custom kernels or implementing standard algorithms from scratch because the library you need does not exist yet.

Then there is the talent problem.

There are hundreds of thousands of developers who know how to build systems in PyTorch. There are significantly fewer who know how to write clean, performant JAX code. And there are even fewer who can navigate the strict rules of the Rust compiler while understanding the nuances of deep learning systems.

By choosing JAX and Rust, xAI made a deliberate choice to trade ecosystem support for raw performance and reliability. They decided that the cost of hiring a small team of elite systems engineers was lower than the cost of GPU idle time at twenty-thousand-node scale.

Conclusion: The New Infrastructure Blueprint

The standard advice for startups is still to use PyTorch. For 95% of use cases, the developer velocity you get from the PyTorch ecosystem outweighs any performance penalties you pay at runtime.

But if you are aiming for the frontier, the game changes.

The xAI stack is a blueprint for the next phase of AI engineering. It signals a shift from treating AI as a branch of data science to treating it as a hard systems engineering discipline.

We are moving past the era where you can just write some Python scripts and hope for the best. To train the models of tomorrow, you need to understand compilation targets, memory alignment, and zero-overhead concurrency.

JAX and Rust might seem like an unusual combination today. But as context windows grow and clusters scale to hundreds of thousands of chips, they might become the only way to survive the build. It is a bet on engineering excellence over ecosystem convenience, and at the frontier scale, that is the only bet that matters.