· AI Engineering · 8 min read

The 2026 Enterprise Stack: Integrating Hardware, Agents, and Strategy

A comprehensive reference architecture linking all four pillars.

- Siloed approaches to AI design (treating infrastructure, engineering, and strategy as completely separate domains) lead inevitably to fragile, low-ROI deployments.

- The 2026 Enterprise Stack is a vertically integrated architecture where underlying hardware choices directly dictate high-level agentic capabilities.

- This comprehensive reference architecture links raw compute at the base, through vector caching and routing protocols in the middle, up to the Governance layer at the top.

- Mastering this stack requires understanding the intense friction points between the layers, rather than just optimizing within a single silo.

- Strategic goals must directly shape the engineering primitives, and hardware constraints must directly inform business expectations.

- Zero-trust security and data lakehouse architectures form the critical glue that allows the distinct layers to communicate safely at scale.

- Organizations must actively resist the fallacy of purchasing a massive, monolithic unified platform from a single vendor, favoring instead highly modular, best-of-breed components interconnected by open protocols.

For the past several weeks, we have dissected the distinct pillars of the modern AI landscape. We have explored the strategic imperative of The P&L Mandate, the physical limits of Rack-Scale AI Design, the complexities of the Agentic Software Development Lifecycle, and the inference mechanics of Chunked Prefills.

But when viewed in isolation, these are just academic concepts. In the reality of a production environment, they are deeply intertwined dependencies.

You cannot design a reliable multi-agent system without understanding the KV Cache memory limits of your underlying hardware. You cannot formulate a viable business strategy for automated customer support without understanding the latency tax imposed by your vector database architecture.

The 2026 Enterprise Stack is not just a collection of disconnected software tools. It is a unified, vertically integrated organism.

Let us synthesize these four pillars into a single, cohesive reference architecture, detailing exactly how the layers interact and depend on each other for survival.

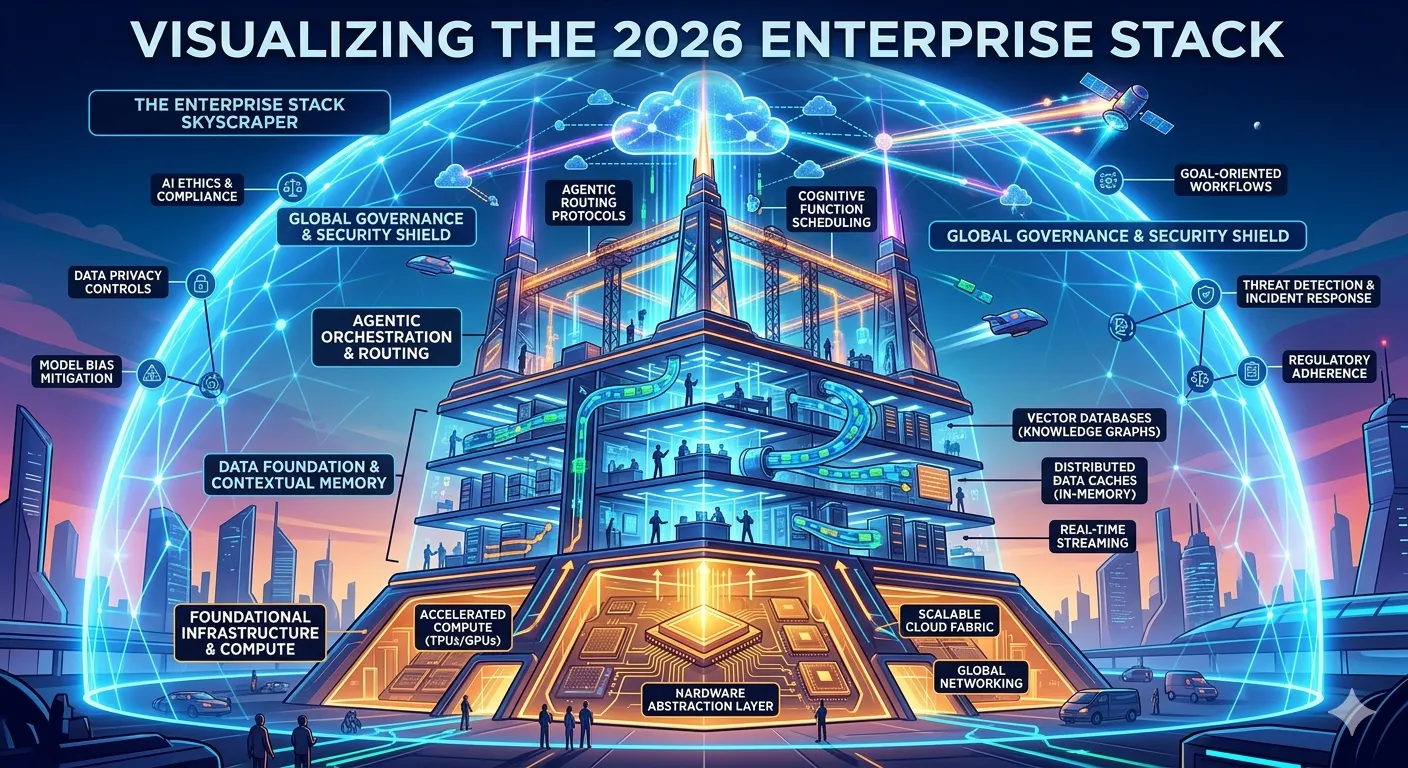

Explainer Diagram: A highly stylized, metaphorical infographic showing the “2026 Enterprise Stack” as a massive, futuristic skyscraper. The foundation is glowing TPUs/Hardware, the middle floors are bustling data caches and Vector DBs, the upper floors are Agentic routing protocols shooting data beams, and the entire structure is shielded by a glowing forcefield representing Governance.

Layer 1: The Physical Foundation (Hardware & Networking)

Everything rests entirely on the immutable physics of compute and data movement. We have finally moved past the comfortable illusion that the cloud abstracts the hardware away from the developer.

- The Compute Substrate: We are no longer provisioning individual GPUs and hoping for the best. We are provisioning fully integrated, liquid-cooled racks. The hardware layer is defined by large pools of High Bandwidth Memory (HBM3e) and specialized chip-to-chip interconnects like NVLink or Google’s Inter-Chip Interconnect (ICI). For inference in particular, the limiting factor is often no longer FLOPs; it is memory bandwidth.

- The Network Fabric: As discussed extensively in our analysis of Ultra Ethernet, the network is the computer. The ability to move data across one hundred thousand chips without packet loss or jitter dictates your entire training and inference ceiling. A standard kernel TCP/IP stack adds too much latency and CPU overhead at this scale; we rely on RDMA over Converged Ethernet (RoCEv2) or proprietary fabrics.

- The Storage Wall: High-performance parallel file systems and heavily optimized object storage form the data bedrock. If your GPUs are waiting on data from a slow storage bucket, you are experiencing Badput (accelerator time you pay for that produces no useful work) and burning capital for zero return.
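The memory-bandwidth claim in the Compute Substrate bullet can be sanity-checked with simple arithmetic. During single-sequence decoding, each generated token requires streaming roughly all model weights out of HBM once, so bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch below is a back-of-envelope estimate only: the function name is ours, the 70B model size and 4,000 GB/s bandwidth figure are illustrative assumptions rather than vendor specifications, and KV cache reads and kernel overheads are ignored.

```python
def decode_tokens_per_second_bound(params_billion: float,
                                   bytes_per_param: float,
                                   hbm_bandwidth_gb_s: float) -> float:
    """Rough upper bound on single-sequence decode speed.

    Assumes every token requires reading all weights from HBM once and
    that nothing else (KV cache reads, kernel launch overhead) matters.
    """
    # 1e9 parameters * bytes per parameter = weight size in GB.
    weight_gb = params_billion * bytes_per_param
    return hbm_bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    # Hypothetical figures: a 70B-parameter model in 16-bit precision
    # on an accelerator with 4,000 GB/s of aggregate HBM bandwidth.
    bound = decode_tokens_per_second_bound(70, 2, 4000)
    print(f"bandwidth-bound ceiling: {bound:.1f} tokens/s per sequence")
```

Under these assumed numbers the ceiling is about 28.6 tokens per second per sequence no matter how many FLOPs the chip offers, which is why batching (amortizing one weight read across many sequences) matters so much in Layer 2.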

Layer 2: The Engineering Primitives (Inference & Data)

This layer translates the raw, brute-force compute of Layer 1 into usable, low-latency intelligence. It is the critical battleground where profit margins are made or lost.

- Inference Engines: This is the realm of highly optimized inference servers like vLLM, SGLang, and TensorRT-LLM. Here, we implement advanced techniques like Continuous Batching, PagedAttention (which allocates the KV cache in fixed-size blocks to reduce memory fragmentation), and Speculative Decoding. The goal is to maximize batch throughput while keeping Time To First Token (TTFT) low, two objectives that pull against each other.

- The State & Memory Tier: The model calls behind an autonomous agent are stateless, but the agent's memory is not. This tier includes the high-speed Embedding Caches built on Redis for real-time operational speed, backed by heavier Vector Databases for semantic similarity retrieval (approximate nearest-neighbor search, not exact-match lookup) over historical data.

- Model Right-Sizing: We reject the monolithic model approach. This layer involves aggressively distilled 7B and 8B models handling specific, narrow classification tasks incredibly fast, while reserving massive frontier models only for deep, complex reasoning tasks. We utilize dynamic LoRA adapters to hot-swap capabilities without reloading entire model weights into VRAM.
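The right-sizing idea above boils down to a routing decision made before any model is called. Here is a minimal sketch of such a router. The tier names, the token threshold, and the set of "narrow" task types are all hypothetical placeholders; a real deployment would derive them from its own evaluation data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    max_prompt_tokens: int


# Hypothetical tiers; the names and limits are illustrative only.
SMALL = ModelTier("distilled-8b", max_prompt_tokens=2_000)
FRONTIER = ModelTier("frontier-large", max_prompt_tokens=128_000)

# Task types we assume are narrow enough for the small model.
NARROW_TASKS = frozenset({"classify", "extract", "route"})


def choose_tier(task_type: str, prompt_tokens: int) -> ModelTier:
    """Send narrow, short tasks to the small model; everything else escalates."""
    if task_type in NARROW_TASKS and prompt_tokens <= SMALL.max_prompt_tokens:
        return SMALL
    return FRONTIER


if __name__ == "__main__":
    print(choose_tier("classify", 300).name)   # narrow and short
    print(choose_tier("plan", 300).name)       # open-ended reasoning
```

The design choice worth noting is that escalation is the default: anything the router does not recognize goes to the larger model, so a misclassified task costs money rather than quality.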

Layer 3: The Agentic Orchestration (Logic & Protocols)

This is the central nervous system of the enterprise stack. It is where models are granted tools, persistent memory, and the autonomy to execute plans.

- The Protocol Layer: The Model Context Protocol (MCP) and the Agent2Agent (A2A) protocol sit precisely here. They provide a standardized JSON-RPC backbone that allows agents to discover tools, access secure data sources, and hand off complex tasks to heterogeneous systems without relying on brittle, hard-coded API wrappers.

- The Execution Loop: State machines and frameworks like LangGraph manage the complex, cyclic reasoning loops. This involves carefully managed scratchpads, progressive discovery of information, and strict token boundaries to prevent context window bloat and infinite loops.

- The Supervisor Mesh: Decentralized swarms are unpredictable and dangerous in the enterprise. We implement strict Supervisor patterns where a central orchestrator delegates specific sub-tasks to highly specialized worker agents. This mesh enforces strict deadlines and resolves logical conflicts via dedicated Judge Agents.
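To make the Execution Loop and Supervisor Mesh bullets concrete, here is a framework-free sketch of a supervisor that delegates sub-tasks to named workers under a hard step and token budget. It is not how LangGraph or any specific framework implements this; `LoopBudget`, `run_supervisor`, and the worker signature are names we made up for illustration, and the workers are plain callables standing in for real agents.

```python
from dataclasses import dataclass
from typing import Callable

# A worker takes a sub-task description and returns (output, tokens_consumed).
Worker = Callable[[str], tuple[str, int]]


@dataclass
class LoopBudget:
    max_steps: int
    max_tokens: int
    steps_used: int = 0
    tokens_used: int = 0

    def exhausted(self) -> bool:
        """True once either the step limit or the token limit is reached."""
        return (self.steps_used >= self.max_steps
                or self.tokens_used >= self.max_tokens)

    def record(self, tokens: int) -> None:
        self.steps_used += 1
        self.tokens_used += tokens


def run_supervisor(subtasks: list[tuple[str, str]],
                   workers: dict[str, Worker],
                   budget: LoopBudget) -> dict:
    """Delegate (worker_name, task) pairs in order, halting when the budget runs out."""
    results: dict[str, str] = {}
    for worker_name, task in subtasks:
        if budget.exhausted():
            # Stop before spending more; return whatever finished so far.
            return {"status": "halted", "results": results}
        output, tokens = workers[worker_name](task)
        budget.record(tokens)
        results[task] = output
    return {"status": "done", "results": results}


if __name__ == "__main__":
    workers = {
        "classifier": lambda task: (f"label for {task}", 20),
        "summarizer": lambda task: (f"summary of {task}", 100),
    }
    plan = [("classifier", "ticket-1"), ("summarizer", "ticket-1-thread")]
    print(run_supervisor(plan, workers, LoopBudget(max_steps=5, max_tokens=1_000)))
```

Checking the budget before each delegation, rather than after, means a runaway plan is cut off without paying for one more model call.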

Layer 4: The Strategic Umbrella (Governance & UI)

The top layer is where the complex underlying technology interfaces directly with the business reality and the end user.

- Generative Interfaces (GenUI): This marks the death of the static, one-size-fits-all dashboard. UI components are dynamically rendered on the client side based entirely on the specific context of the agentic interaction, providing a bespoke, highly relevant experience for every single query.

- The Human-on-the-Loop: As we explored, humans do not review drafts; they manage the system. The command center provides deep telemetry on agent drift, token cost consumption (Agent FinOps), and anomaly detection. This allows human operators to inject strategic intent at predefined checkpoints rather than serving as manual bottlenecks.

- Governance-as-Code: The NIST AI RMF is codified directly into the CI/CD pipeline. IAM policies, hard budget circuit breakers, and automated red-teaming simulations exist entirely as code. This ensures that compliance actually accelerates deployment rather than blocking it.
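A "hard budget circuit breaker" from the Governance-as-Code bullet can be very small in code. The sketch below is one possible shape, under our own assumptions: the class and exception names are hypothetical, costs are tracked in USD per 1,000 tokens, and the breaker stays open (rejecting everything) once tripped until an operator replaces it.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would push spend past the configured limit."""


class BudgetCircuitBreaker:
    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0
        self.is_open = False  # open = tripped, all further charges rejected

    def charge(self, tokens: int, usd_per_1k_tokens: float) -> None:
        """Record the cost of a model call, or trip the breaker and refuse it."""
        if self.is_open:
            raise BudgetExceeded("breaker already open; operator reset required")
        cost = tokens / 1000 * usd_per_1k_tokens
        if self.spent_usd + cost > self.limit_usd:
            self.is_open = True
            raise BudgetExceeded(
                f"charge of ${cost:.2f} would exceed limit ${self.limit_usd:.2f}"
            )
        self.spent_usd += cost


if __name__ == "__main__":
    breaker = BudgetCircuitBreaker(limit_usd=1.00)
    breaker.charge(tokens=50_000, usd_per_1k_tokens=0.01)  # accepted
    try:
        breaker.charge(tokens=60_000, usd_per_1k_tokens=0.01)  # would overspend
    except BudgetExceeded as exc:
        print("blocked:", exc)
```

Because the limit lives in code, it can be reviewed, versioned, and tested in the same CI/CD pipeline as the agents it constrains.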

The Role of the Data Lakehouse in Agentic Workflows

To glue these disparate layers together, the enterprise stack heavily relies on modern Data Lakehouse architectures.

Agents operating in Layer 3 cannot wait for overnight batch jobs to access corporate data. They require fresh, structured access to that data. Open table formats like Apache Iceberg or Delta Lake bring ACID transactions, snapshot isolation, and schema evolution to files in object storage, giving the analytical data layer much of the reliability of a transactional database.

This means that an agent can execute an SQL query against petabytes of historical customer data securely, receive a structured response within an interactive time budget (typically seconds, when partition pruning and caching apply), and immediately factor that data into its reasoning loop. The Lakehouse acts as the universal translation layer between the raw storage of Layer 1 and the complex reasoning engines of Layer 3.

Security and Zero-Trust in the Multi-Agent Mesh

Security cannot be bolted onto the top of this stack; it must be interwoven through every layer. We must adopt a Zero-Trust architecture specifically tailored for autonomous agents.

When an agent requests access to a database, the system must not assume trust simply because the agent resides inside the corporate firewall. The Identity and Access Management (IAM) layer must authenticate the specific agent session, verify the cryptographic signature of the prompt that initiated the request, and validate that the requested action falls within the strict budgetary and access boundaries defined in Layer 4’s Governance-as-Code policies.

If any of these checks fail, the request is instantly denied at the network level, severing the connection before the database is ever reached.
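The three checks described above (session, prompt signature, policy boundaries) can be sketched as a single authorization function. Two loud assumptions: we use HMAC-SHA256 with a shared secret as a stand-in for the "cryptographic signature", whereas a production system would more likely use asymmetric signatures and a real identity provider; and the `AgentRequest` and `Policy` types are hypothetical shapes invented for this example.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRequest:
    session_id: str
    prompt: str
    prompt_signature: str  # hex-encoded HMAC-SHA256 of the prompt
    action: str
    estimated_cost_usd: float


@dataclass(frozen=True)
class Policy:
    active_sessions: frozenset
    allowed_actions: frozenset
    max_cost_usd: float


def sign_prompt(prompt: str, key: bytes) -> str:
    """Compute the hex HMAC-SHA256 tag for a prompt."""
    return hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()


def authorize(request: AgentRequest, policy: Policy, key: bytes) -> bool:
    """Deny by default: every check must pass before the database is reached."""
    if request.session_id not in policy.active_sessions:
        return False
    expected = sign_prompt(request.prompt, key).encode("utf-8")
    supplied = request.prompt_signature.encode("utf-8")
    # Constant-time comparison avoids leaking the tag through timing.
    if not hmac.compare_digest(expected, supplied):
        return False
    if request.action not in policy.allowed_actions:
        return False
    return request.estimated_cost_usd <= policy.max_cost_usd
```

Note that residing "inside the firewall" appears nowhere in the function: the only inputs are the session, the signed prompt, and the policy.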

The Fallacy of the Unified Platform

As enterprises look to implement this architecture, they will inevitably be pitched massive, unified platforms by single monolithic vendors promising to handle everything from the physical compute all the way up to the generative user interface.

Purchasing a unified platform is a catastrophic strategic error.

The velocity of innovation at every single layer of this stack is simply too high. The company building the best vector database index today will not be the company building the best agentic routing protocol tomorrow. If you lock your enterprise into a single vendor’s proprietary ecosystem, you are tying your execution speed to their slowest internal development team.

Instead, the 2026 Enterprise Stack demands a highly modular, best-of-breed approach. You must architect your system so that you can hot-swap your inference engine, your embedding cache, or your orchestrator without disrupting the rest of the business. You achieve this modularity by strictly adhering to open standards and protocols (like the Model Context Protocol) at the seams between the layers.
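In code, "hot-swappable at the seams" usually means the business logic depends on a narrow interface rather than on a vendor SDK. The sketch below uses Python's `typing.Protocol` for that seam. The `InferenceEngine` interface and both toy engines are hypothetical stand-ins; real adapters would wrap whichever inference server sits behind them.

```python
from typing import Protocol


class InferenceEngine(Protocol):
    """The seam: anything with this method can serve the agent."""

    def generate(self, prompt: str, max_tokens: int) -> str: ...


class EchoEngine:
    """Toy stand-in for one vendor's engine."""

    def generate(self, prompt: str, max_tokens: int) -> str:
        return prompt[:max_tokens]


class UppercaseEngine:
    """Toy stand-in for a competing engine."""

    def generate(self, prompt: str, max_tokens: int) -> str:
        return prompt[:max_tokens].upper()


class SupportAgent:
    def __init__(self, engine: InferenceEngine) -> None:
        self._engine = engine

    def swap_engine(self, engine: InferenceEngine) -> None:
        # No other part of the agent needs to change.
        self._engine = engine

    def answer(self, question: str) -> str:
        return self._engine.generate(question, max_tokens=64)


if __name__ == "__main__":
    agent = SupportAgent(EchoEngine())
    print(agent.answer("where is my order?"))
    agent.swap_engine(UppercaseEngine())
    print(agent.answer("where is my order?"))
```

The narrower the interface, the cheaper the swap; every vendor-specific option that leaks into `SupportAgent` is a small piece of lock-in.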

The Friction Between the Layers

The most damaging failures in enterprise AI do not occur within a single layer; they occur at the seams between them.

If a strategy executive mandates a complex, long-context autonomous agent (Layer 4) without consulting the platform engineering team about the extreme VRAM limitations of KV Caching (Layer 2), the project will fail spectacularly in production due to Out-of-Memory errors or astronomical latency spikes.
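The conversation between strategy and platform engineering can start from one line of arithmetic. For a standard transformer, the KV cache holds one key and one value vector per layer, per KV head, per token, so its size grows linearly with context length and with the number of concurrent sessions. The model shape below (80 layers, 8 KV heads, head dimension 128, 16-bit cache) is an illustrative assumption, not a description of any specific model.

```python
def kv_cache_bytes(num_layers: int,
                   num_kv_heads: int,
                   head_dim: int,
                   seq_len: int,
                   batch_size: int,
                   bytes_per_element: int = 2) -> int:
    """KV cache size: 2 tensors (K and V) per layer, per KV head, per token."""
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_element)


if __name__ == "__main__":
    GIB = 1024 ** 3
    # Hypothetical model shape; one 128K-token session in 16-bit precision.
    one_session = kv_cache_bytes(80, 8, 128, seq_len=131_072, batch_size=1)
    print(f"one session:    {one_session / GIB:.0f} GiB")
    # Eight concurrent long-context sessions.
    eight = kv_cache_bytes(80, 8, 128, seq_len=131_072, batch_size=8)
    print(f"eight sessions: {eight / GIB:.0f} GiB")
```

Under these assumptions a single 128K-token session needs 40 GiB of cache on top of the model weights, and eight concurrent sessions need 320 GiB, which is the kind of number that should be on the table before a long-context agent is promised to the business.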

If an infrastructure team provisions cheap, unreliable hardware without implementing proper fault-tolerance (Layer 1), the resulting cluster crashes will completely destroy the unit economics of the inference engine (Layer 2).

Building the 2026 Enterprise Stack requires deep interdisciplinary fluency. The Chief AI Officer cannot just understand business models; they must deeply understand the physics of data movement. The AI Engineer cannot just write Python scripts; they must understand how their execution loops impact the corporate P&L statement.

This is the blueprint for the next decade of software architecture. Build it thoughtfully, respecting the constraints of every layer.