· AI Infrastructure · 6 min read

Breaking the Bandwidth Wall: Why AI Clusters are Shifting to Ultra Ethernet

To scale past 100k GPUs, the industry is replacing proprietary InfiniBand with AI-optimized Ultra Ethernet.

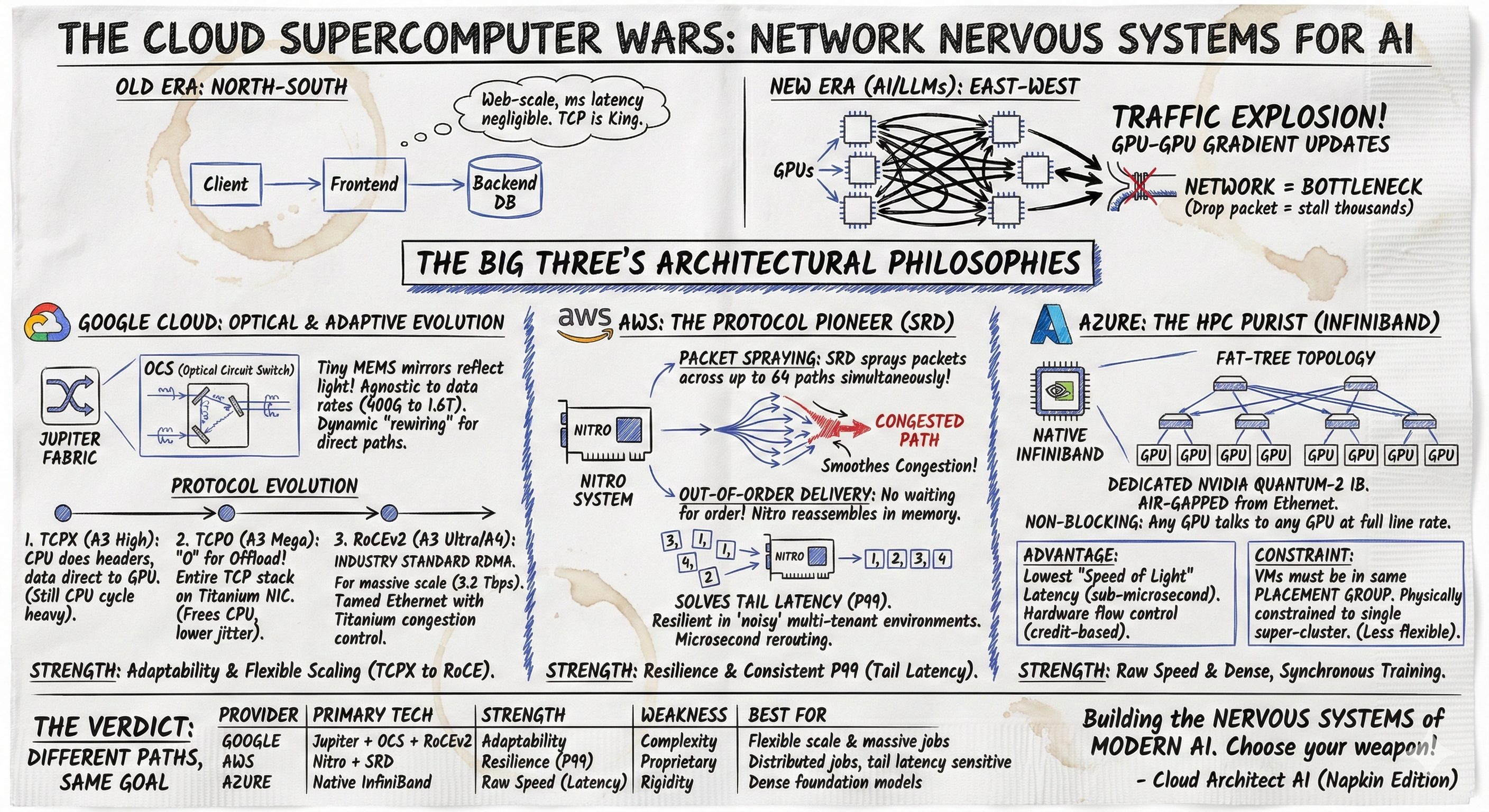

- As AI training clusters push past 100,000 GPUs, the primary bottleneck is no longer compute; it is the inter-chip networking fabric.

- Proprietary networking hardware has historically dominated this space, but closed ecosystems create massive vendor lock-in and limit multi-vendor scaling.

- The Ultra Ethernet Consortium (UEC) and advanced RoCE (RDMA over Converged Ethernet) are standardizing high-speed AI networking, allowing massive clusters to achieve extreme low latency on open protocols.

When you attempt to train a trillion-parameter frontier model, you stop being a software engineer and you become a physicist.

At a certain scale, the code running on the GPU matters significantly less than the speed at which that GPU can talk to the 99,999 other GPUs in the datacenter. If you read my previous breakdown in Visualizing All-Reduce Bandwidth, you understand that distributed training is essentially an exercise in highly synchronized data sharing. The network is the computer.

For the last several years, the AI networking narrative has been entirely dominated by proprietary protocols. They are incredibly fast, highly optimized, and absolutely closed. But as we move into the reality of large scale AI training, where cloud providers are building colossal 100k+ accelerator clusters, the proprietary walls are starting to crack. The industry has hit the bandwidth wall, and the escape hatch is Ultra Ethernet.

The Physics of the All-Reduce

To understand why network fabric matters, you must understand how massive models are trained. We use techniques like 3D Parallelism (Data, Tensor, and Pipeline parallelism). During training, every GPU computes a small piece of the gradient (the mathematical update to the model’s weights).

Before the next step can proceed, every single GPU must share its gradient update with every other GPU. This is the All-Reduce operation.

If you have 100,000 GPUs, the sheer volume of data moving horizontally across the datacenter (East-West traffic) is staggering. If one single network link drops a packet, or if one switch is congested, the entire cluster halts. 100,000 highly expensive accelerators sit entirely idle, waiting for that one delayed packet to arrive. This is known as “tail latency.” In a massive cluster, your training speed is entirely determined by the slowest packet in the network.

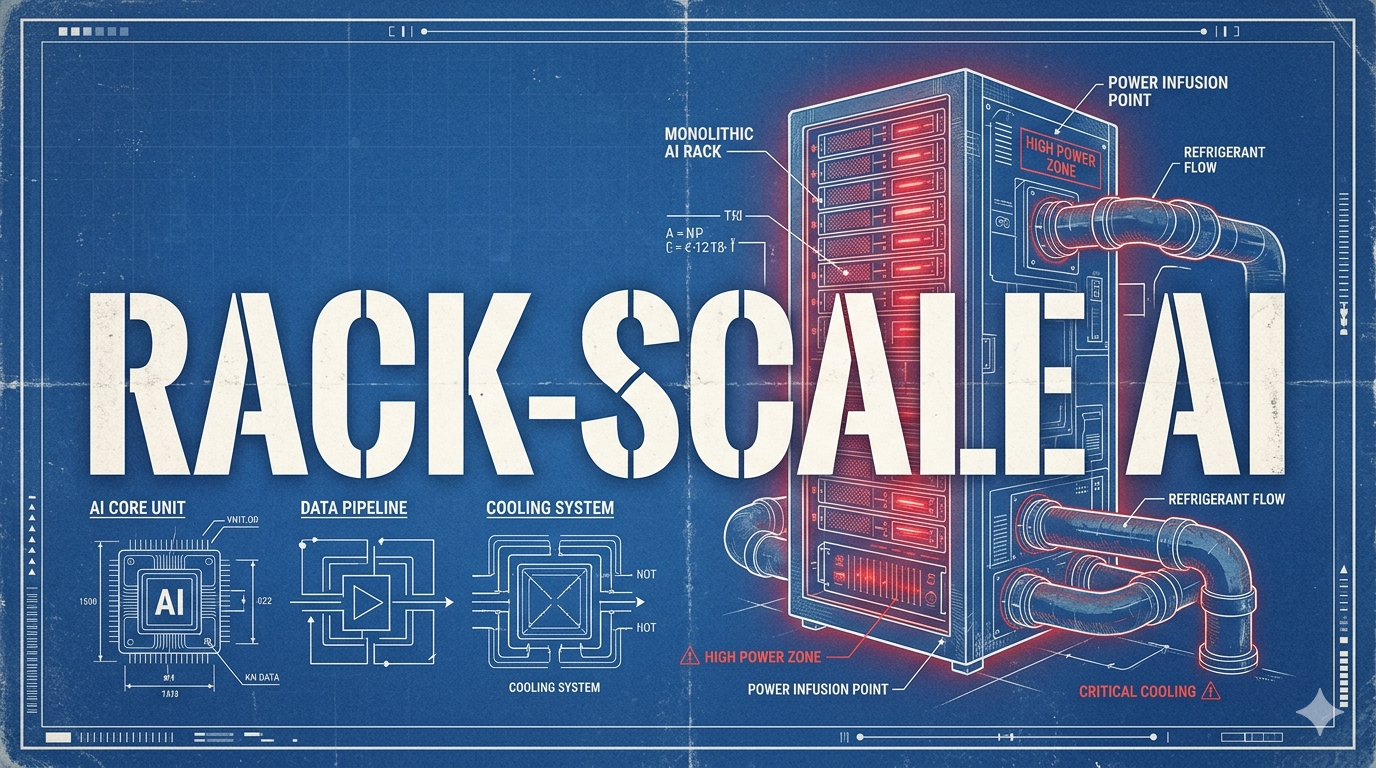

The Problem with Proprietary Fabrics

Proprietary interconnects solve the tail latency problem through brute force and total control. When you buy a massive, vertically integrated server rack, you are not just buying the compute; you are buying the entire proprietary fabric that connects them. It guarantees ultra-low latency and lossless packet delivery, which is strictly required for synchronous operations.

However, this comes with a severe architectural tax. It forces you into a single-vendor ecosystem. If you build your entire datacenter around a closed fabric, you cannot easily drop in a competing accelerator or a custom ASIC. You are locked in.

Furthermore, as Network Design for AI Workloads highlighted, managing these proprietary networks at the hyperscale level requires highly specialized skill sets that do not translate well to the standard IT teams that have spent the last two decades optimizing Ethernet.

When you scale past 100,000 nodes, the constraints of a closed ecosystem become a hard limit on innovation and cost control. The cloud providers recognized this vulnerability, which catalyzed the aggressive acceleration of an open alternative.

The Rise of Ultra Ethernet (UEC)

The assumption used to be that standard Ethernet was simply too “lossy” and unpredictable for the extreme demands of AI training. If a standard TCP/IP packet drops on a web server, the user waits an extra 50 milliseconds. If an update packet drops during a synchronized gradient update across 50,000 accelerators, the entire cluster halts, waits for a timeout, and resyncs. That halt costs tens of thousands of dollars in wasted compute time.

The industry’s answer is the Ultra Ethernet Consortium (UEC). Backed by an enormous coalition of hardware providers, the UEC is fundamentally re-architecting Ethernet specifically for AI workloads.

The core of this revolution is advanced RoCE (RDMA over Converged Ethernet). RDMA (Remote Direct Memory Access) allows one computer to write directly to the memory of another computer without involving the operating system kernel. This bypasses the traditional CPU bottlenecks and drastically slashes latency.

graph TD

subgraph Traditional TCP/IP Stack

A1[Application] --> B1[OS Kernel Space / TCP]

B1 --> C1[Network Interface Card]

C1 --> D1((Switch))

D1 --> C2[Network Interface Card]

C2 --> B2[OS Kernel Space / TCP]

B2 --> A2[Application]

end

subgraph RoCEv2 Stack / Ultra Ethernet

A3[Application Memory] == "Kernel Bypass" ==> C3[RDMA NIC]

C3 == "Lossless Ethernet Transport" ==> D2((Switch))

D2 == "Lossless Ethernet Transport" ==> C4[RDMA NIC]

C4 == "Kernel Bypass" ==> A4[Application Memory]

end

style Traditional TCP/IP Stack fill:#fce4ec,stroke:#d81b60,stroke-width:2px

style RoCEv2 Stack / Ultra Ethernet fill:#e8f5e9,stroke:#2e7d32,stroke-width:2pxBut UEC goes further. It introduces specialized congestion control algorithms, packet spraying (distributing traffic across multiple paths simultaneously to avoid micro-burst bottlenecks), and enhanced link-level reliability. It takes the ubiquitous, cheap, and universally understood Ethernet physical layer and upgrades its brain to handle the chaotic, bursty physics of AI traffic.

Scalable Optical Switching: A Specific Architecture

To see what this looks like in practice, we can look at how hyperscalers deploy massive AI fabrics. While some rely entirely on standard electrical switching, others deploy specialized optical switches.

For instance, certain cloud providers utilize proprietary optical circuit-switched networks. This approach proves that you do not need a closed electrical ecosystem to achieve world-class AI networking. These architectures use standard optical interconnects and highly tuned networking stacks to deliver massive throughput.

When you provision a massive cluster, the underlying optical fabric dynamically reconfigures its mirrors to create direct, dedicated light pathways between the racks that need to communicate most heavily. This effectively creates an ad-hoc, zero-latency physical connection on demand.

This is the power of decoupling the compute from the network fabric. By relying on open standards (like advanced Ethernet) or highly flexible optical fabrics, cloud providers can seamlessly integrate new generations of silicon—from various vendors—without having to rip and replace the entire datacenter wiring.

Escaping the Bandwidth Wall

The shift to Ultra Ethernet is the most important infrastructure story of modern AI. It represents the maturation of the AI hardware stack.

Early markets are always dominated by monolithic, vertically integrated solutions because they are the easiest way to guarantee performance when the technology is new. But as the market matures and scales to industrial proportions, open standards inevitably take over. They drive down costs, increase supply chain resilience, and enable massive, multi-vendor architectures.

The software ecosystem is also rapidly adapting. Communication libraries (like NCCL or RCCL) are being heavily optimized to leverage these new Ethernet backbones efficiently. This ensures that the upper-layer frameworks (like PyTorch or JAX) do not need to change; the massive speedup happens invisibly at the transport layer.

If you are architecting a cluster today, you must assume that the future of the datacenter is Ethernet. The bandwidth wall is real, but we are not going to break through it by buying more proprietary cables. We are going to break through it by upgrading the protocol that already connects the world. The era of the single-vendor AI supercomputer is ending; the era of the open, interoperable AI datacenter has begun.