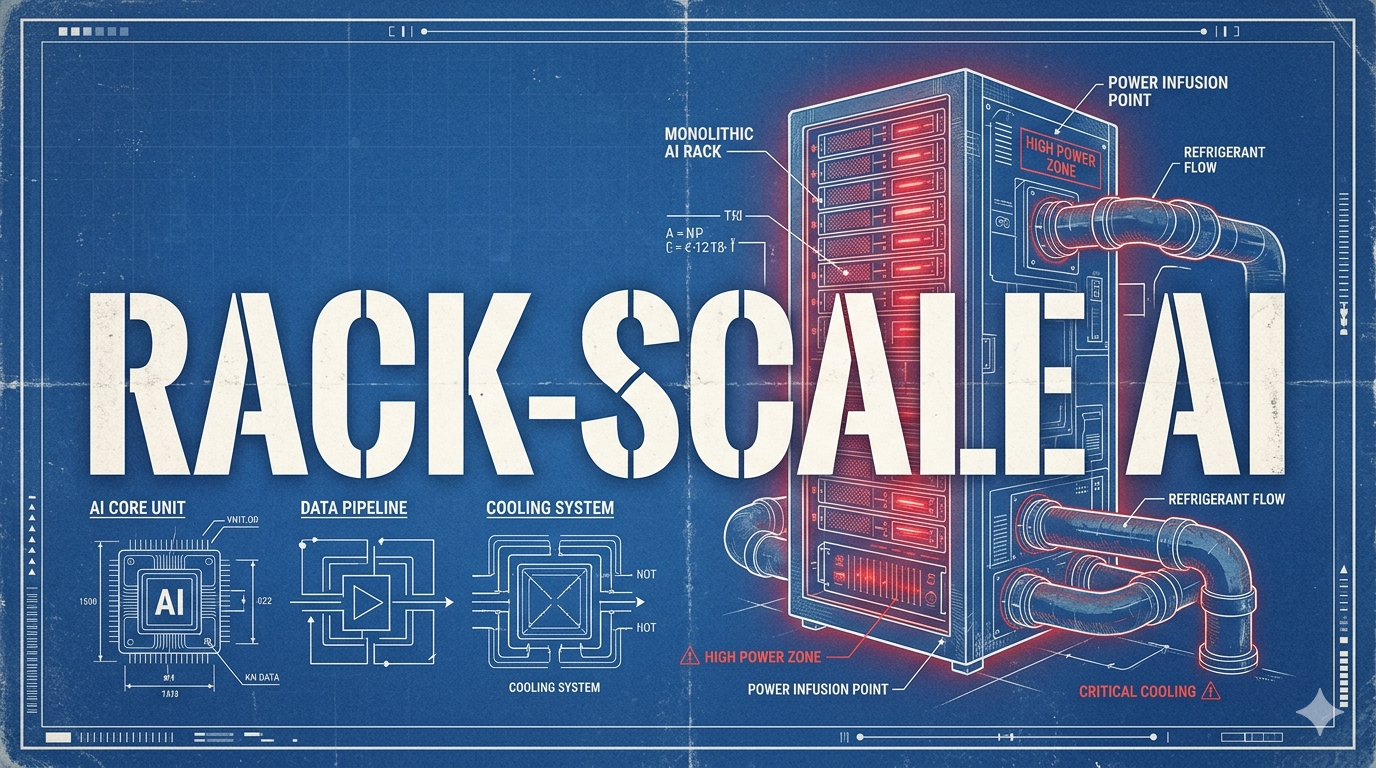

Rack-Scale AI Design: The End of Component Scaling

We have hit the physical limits of what a single chip can do. The new unit of compute for AI infrastructure isn't the GPU; it's the fully integrated rack.

- Comparing individual GPU or TPU specifications is no longer a useful metric for evaluating AI infrastructure performance.

- We have hit the physical limitations of single-chip scaling due to power density and inter-chip bandwidth constraints.

- The new atomic unit of compute is the fully integrated rack, where networking, cooling, and compute are co-designed as a single system.

- When provisioning clusters, teams must architect for rack-scale topologies to avoid catastrophic network bottlenecks.

For years, the hardware conversation in AI was incredibly simple. We all just looked at the specs of a single chip. We compared the FLOPS. We looked at the memory bandwidth. We celebrated every time a new piece of silicon dropped that was 2x faster than the last one. It was a comfortable, predictable cycle of component scaling.

That era is over. It is dead.

If you are trying to build large-scale inference or training infrastructure today, and you are making decisions based on the performance of a single accelerator, you are architecting for failure. We have fundamentally broken the model of component scaling. The physics simply do not allow it anymore. The new baseline, the new atomic unit of compute in the data center, is the rack.

Let me explain exactly why this happened, what it means for your infrastructure, and how you need to change your approach.

The Physics of the Wall

Why did component scaling die? It died because we hit three massive physical walls almost simultaneously: Power, Cooling, and Bandwidth.

Let’s start with power. A modern, high-end accelerator pulls an absurd amount of electricity. We are no longer talking about a few hundred watts. We are pushing well past a kilowatt for a single chip. When you pack eight of these into a standard 4U server chassis, and then stack several of those chassis in a rack, the power draw is staggering. Traditional data center power delivery systems were never designed to handle 40kW to 100kW per rack. They simply cannot deliver it.
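
To make that arithmetic concrete, here is a rough back-of-the-envelope sketch. The per-chip wattage, the number of chassis per rack, and the overhead factor for host CPUs, NICs, and fans are all illustrative assumptions, not figures for any specific product.

```python
# Back-of-the-envelope rack power estimate.
# All numbers below are illustrative assumptions, not vendor specifications.

def rack_power_kw(chip_watts: float, chips_per_chassis: int,
                  chassis_per_rack: int, overhead_factor: float = 1.3) -> float:
    """Estimate total rack power in kW.

    overhead_factor is a hypothetical multiplier covering host CPUs, memory,
    NICs, fans, and power-conversion losses on top of accelerator power.
    """
    accelerator_watts = chip_watts * chips_per_chassis * chassis_per_rack
    return accelerator_watts * overhead_factor / 1000.0


# Assumed: 1,200 W chips, eight per chassis, four or eight chassis per rack.
for chassis in (4, 8):
    print(f"{chassis} chassis: {rack_power_kw(1200, 8, chassis):.1f} kW")
```

Under these assumptions, even a half-populated rack lands inside the 40kW to 100kW range, and a fully populated one sits at the top of it.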

Then comes the cooling. You cannot air-cool these systems anymore. The power density is too high. If you try to blow cold air across a rack pulling 80kW, you just end up with a very loud, very expensive space heater. This is why liquid cooling (direct-to-chip or immersion) is no longer a niche experiment. It is a hard operational requirement, and it now shapes site selection.

But the biggest wall is bandwidth.

As models push into the trillions of parameters, a single chip can no longer hold the weights. The model must be sharded across multiple chips, typically with tensor parallelism. For inference to happen at acceptable speeds, these chips must communicate with each other constantly. They need to exchange large matrices of intermediate activations during every single step of the decode phase.

This requires an inter-chip interconnect with mind-boggling bandwidth. We are talking terabytes per second. Inside a single server chassis, we solve this with specialized high-speed fabrics (like NVLink on GPUs or the inter-chip interconnect on TPUs).

But what happens when your model needs 64 chips? Or 256? You have to leave the chassis. And the moment you leave the chassis, you hit the network.
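
A simple cost model shows how sharply things change at the chassis boundary. The sketch below uses the standard bandwidth-only estimate for a ring all-reduce, a collective commonly used to combine partial results in tensor parallelism. The message size and both bandwidth figures are assumptions chosen for illustration, and latency terms are ignored.

```python
# Bandwidth-only cost model for a ring all-reduce.
# Latency is ignored and all numbers are illustrative assumptions.

def ring_allreduce_seconds(message_bytes: float, num_chips: int,
                           link_bytes_per_sec: float) -> float:
    """In a ring all-reduce, each chip sends and receives
    2 * (N - 1) / N times the message size."""
    if num_chips < 2:
        return 0.0
    traffic = 2 * (num_chips - 1) / num_chips * message_bytes
    return traffic / link_bytes_per_sec


MESSAGE_BYTES = 64 * 1024 * 1024   # assumed 64 MiB of activations per step
FABRIC_BW = 1e12                   # assumed 1 TB/s in-chassis fabric
NETWORK_BW = 50e9                  # assumed 50 GB/s (400 Gb/s) network link

in_chassis = ring_allreduce_seconds(MESSAGE_BYTES, 8, FABRIC_BW)
cross_node = ring_allreduce_seconds(MESSAGE_BYTES, 64, NETWORK_BW)
print(f"8 chips on fabric:   {in_chassis * 1e6:.0f} us")
print(f"64 chips on network: {cross_node * 1e6:.0f} us")
print(f"slowdown: {cross_node / in_chassis:.1f}x")
```

The per-chip traffic barely grows as you add chips. What changes is the denominator: the link you are forced to use once you leave the chassis.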

The Network is the Computer (Again)

When you leave the server chassis, your massive, terabyte-per-second internal fabric suddenly narrows down to your standard Ethernet or InfiniBand network. It is the equivalent of taking a twelve-lane superhighway and forcing all the traffic onto a dirt road.

This is the exact point where single-chip metrics become meaningless. It does not matter if your specific GPU can do a quadrillion FLOPS if it spends 80% of its time sitting idle, waiting for a packet to arrive from another node over a congested network switch.
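
Here is that idle-time argument as a quick calculation. The peak throughput figures and stall times are hypothetical. The only point is that delivered throughput is peak throughput scaled by the fraction of time the chip is actually computing.

```python
# Effective throughput once communication stalls are counted.
# Peak numbers and stall times are hypothetical.

def effective_flops(peak_flops: float, compute_s: float, stalled_s: float) -> float:
    """Peak throughput scaled by the fraction of time spent computing."""
    total_s = compute_s + stalled_s
    if total_s <= 0:
        raise ValueError("total time must be positive")
    return peak_flops * (compute_s / total_s)


# A faster chip behind a congested network vs. a slower chip on a tight fabric.
fast_but_starved = effective_flops(2e15, compute_s=1.0, stalled_s=4.0)   # idle 80% of the time
slow_but_fed = effective_flops(1e15, compute_s=1.0, stalled_s=0.25)      # idle 20% of the time
print(f"fast chip, congested network: {fast_but_starved / 1e15:.2f} PFLOPS")
print(f"slow chip, rack-scale fabric: {slow_but_fed / 1e15:.2f} PFLOPS")
```

In this made-up comparison, the chip with half the peak spec delivers twice the useful work.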

This is why the rack is the new atomic unit.

In a rack-scale design, the entire rack is engineered as a single, massive computer. The interconnect fabric doesn’t stop at the server chassis. The backplane of the rack itself acts as a massive switch, allowing all 64 or 72 accelerators in the rack to communicate with each other with near-uniform latency and bandwidth.

When you deploy a Cloud TPU v7 pod on GCP, you aren’t just renting a bunch of isolated chips. You are renting a pre-configured, optically connected topology. Google has co-designed the silicon, the optical switches, and the cooling infrastructure to ensure that the network does not become the bottleneck until you scale out to thousands of chips.

Architecting for the Rack

So what does this mean for you, the practitioner? It means you have to change how you provision and deploy your clusters.

1. Stop Thinking in Nodes

When you configure your GKE clusters for AI workloads, you can no longer just ask for “100 nodes.” You need to understand the physical topology of those nodes. Are they in the same rack? Are they connected to the same top-of-rack switch?

If your Kubernetes scheduler randomly places your tensor-parallel inference pods across different racks in a zone, your performance will tank. You will introduce massive tail latency as the nodes struggle to communicate across the spine switches of the data center.

You must use topology-aware scheduling. You need to explicitly tell GKE to bin-pack your pods into the tightest physical configuration possible.
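
To show the idea behind that bin-packing, here is a toy placement routine. It is not the GKE or Kubernetes scheduler, and the rack names and free-slot counts are made up. It only illustrates the policy: keep a tensor-parallel group inside one rack when you can, and span as few racks as possible when you cannot.

```python
# Toy topology-aware placement for one tensor-parallel group of pods.
# Not a real scheduler: rack names and slot counts are hypothetical.
from typing import Dict, Optional


def place_group(free_slots: Dict[str, int], pods_needed: int) -> Optional[Dict[str, int]]:
    """Return {rack: pods_placed}, or None if the cluster lacks capacity."""
    # Best fit: the rack with the fewest free slots that still holds the whole group.
    fitting = [rack for rack, free in free_slots.items() if free >= pods_needed]
    if fitting:
        best = min(fitting, key=lambda rack: (free_slots[rack], rack))
        return {best: pods_needed}

    # Fallback: span as few racks as possible by taking the racks
    # with the most free slots first.
    placement: Dict[str, int] = {}
    remaining = pods_needed
    for rack in sorted(free_slots, key=lambda r: (-free_slots[r], r)):
        if remaining == 0:
            break
        take = min(free_slots[rack], remaining)
        if take > 0:
            placement[rack] = take
            remaining -= take
    return placement if remaining == 0 else None


racks = {"rack-a": 16, "rack-b": 72, "rack-c": 40}
print(place_group(racks, 32))    # fits inside a single rack
print(place_group(racks, 100))   # has to span racks
print(place_group(racks, 200))   # not enough capacity
```

In a real cluster you would normally express the same intent declaratively, for example through Kubernetes pod affinity rules with a rack-level topology key, rather than writing your own placement logic. The exact labels available depend on your provider.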

2. The Economics of Scale

Rack-scale design fundamentally changes the economics of AI infrastructure. Because the interconnect and cooling are so tightly integrated, it is often more cost-effective to rent a full, dedicated rack topology than to try to piece a cluster together from spot instances scattered across a region.

This is especially true for large-scale inference. The consistency of a rack-scale interconnect provides deterministic latency. And as we discussed previously, deterministic latency is critical for the user experience. You are paying a premium for the network architecture, not just the compute.

3. Bare Metal Realities

As you push the limits of performance, virtualization becomes a liability. The hypervisor adds overhead. The virtualized network interfaces add jitter.

For the most demanding workloads, we are seeing a strong shift back towards bare-metal deployments. Teams are dropping the shared cloud model entirely. They want direct, unmediated access to the PCIe lanes and the network interface cards (NICs). They want to write custom Pallas kernels that address hardware memory directly without an abstraction layer getting in the way.

This is the reality of the 2026 infrastructure landscape.

The era of easy component upgrades is behind us. We are now in the business of distributed systems engineering. The performance of your AI application is no longer determined by the specs of the chip you choose. It is determined by how efficiently you can move data across a massive, integrated rack of compute. If you don’t design for the network, the network will destroy your performance.

Embrace the rack. It is the only way forward.