Breaking the Bandwidth Wall: Why AI Clusters are Shifting to Ultra Ethernet

To scale past 100k GPUs, the industry is replacing proprietary InfiniBand with AI-optimized Ultra Ethernet.

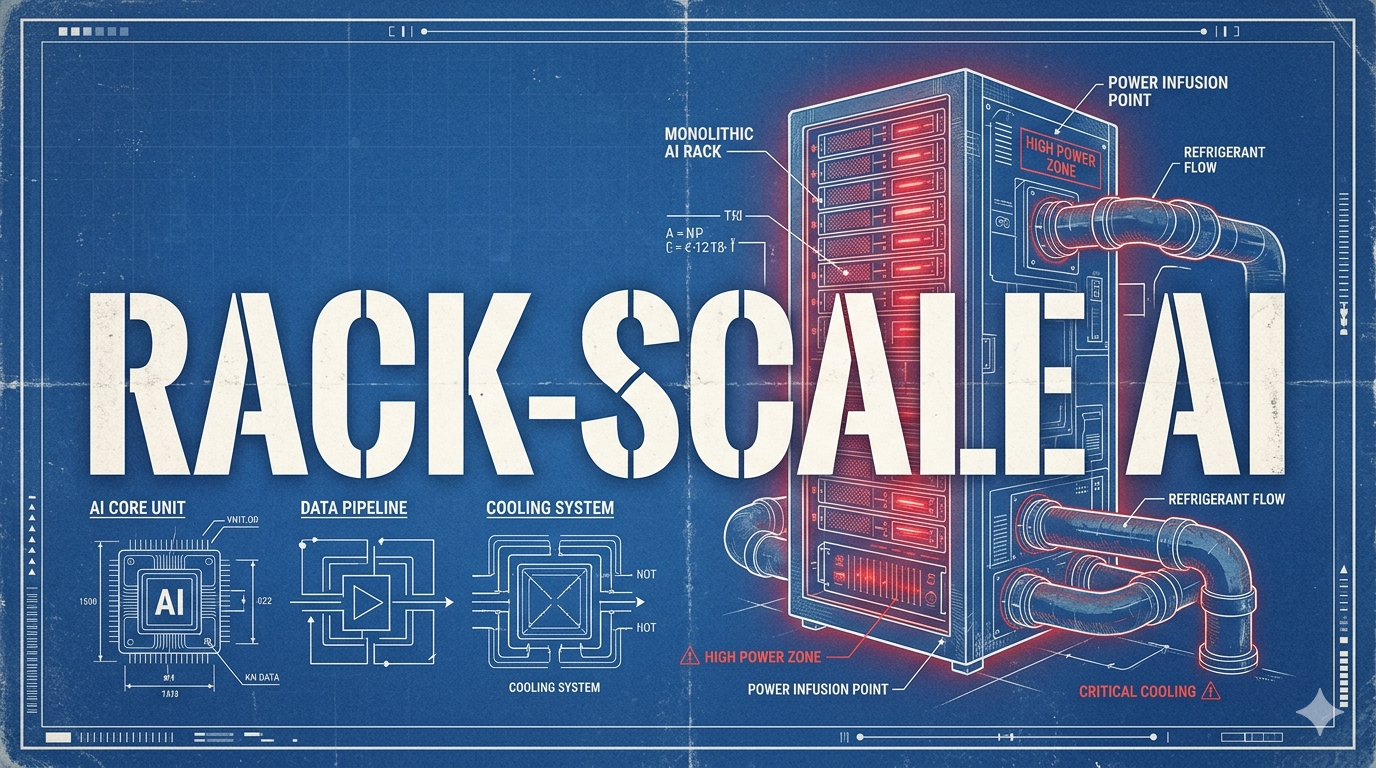

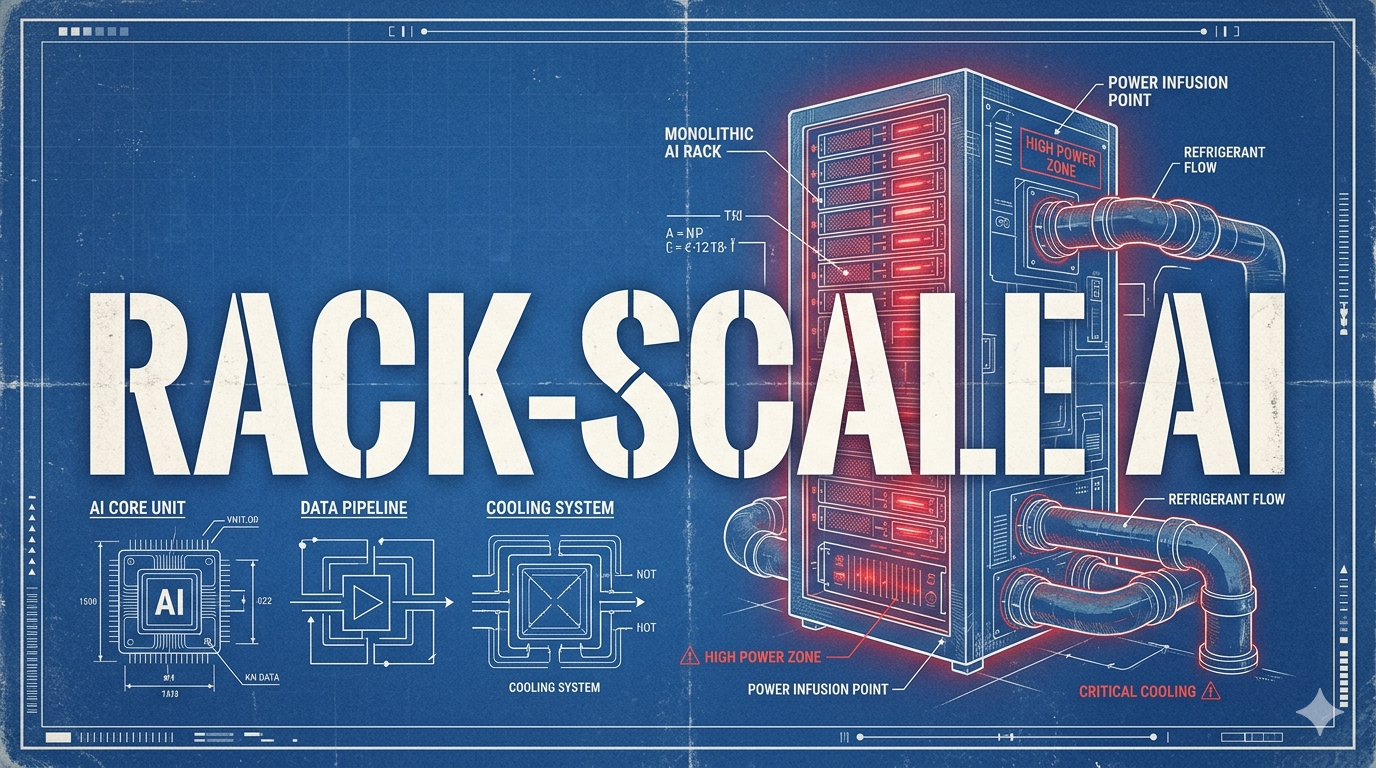

We have hit the physical limits of what a single chip can do. The new unit of compute for AI infrastructure isn't the GPU; it's the fully integrated rack.

The competitive advantage in AI has shifted from raw GPU volume to architectural efficiency: the "Memory Wall" shows that traditional frameworks spend much of their runtime on "data plumbing" rather than on computation. This article explains how the compiler-first JAX AI Stack and its "Automated Megakernels" address this scaling crisis and enable breakthroughs for companies like xAI and Character.ai.