Breaking the Bandwidth Wall: Why AI Clusters are Shifting to Ultra Ethernet

To scale past 100k GPUs, the industry is replacing proprietary InfiniBand with AI-optimized Ultra Ethernet.

To scale past 100k GPUs, the industry is replacing proprietary InfiniBand with AI-optimized Ultra Ethernet.

We have hit the physical limits of what a single chip can do. The new unit of compute for AI infrastructure isn't the GPU; it's the fully integrated rack.

When your model doesn't fit on one GPU, you're no longer just learning coding-you're learning physics. We dive deep into the primitives of NCCL, distributed collectives, and why the interconnect is the computer.

In distributed training, the slowest packet determines the speed of the cluster. We benchmark GCP's 'Circuit Switched' Jupiter fabric against AWS's 'Multipath' SRD protocol.

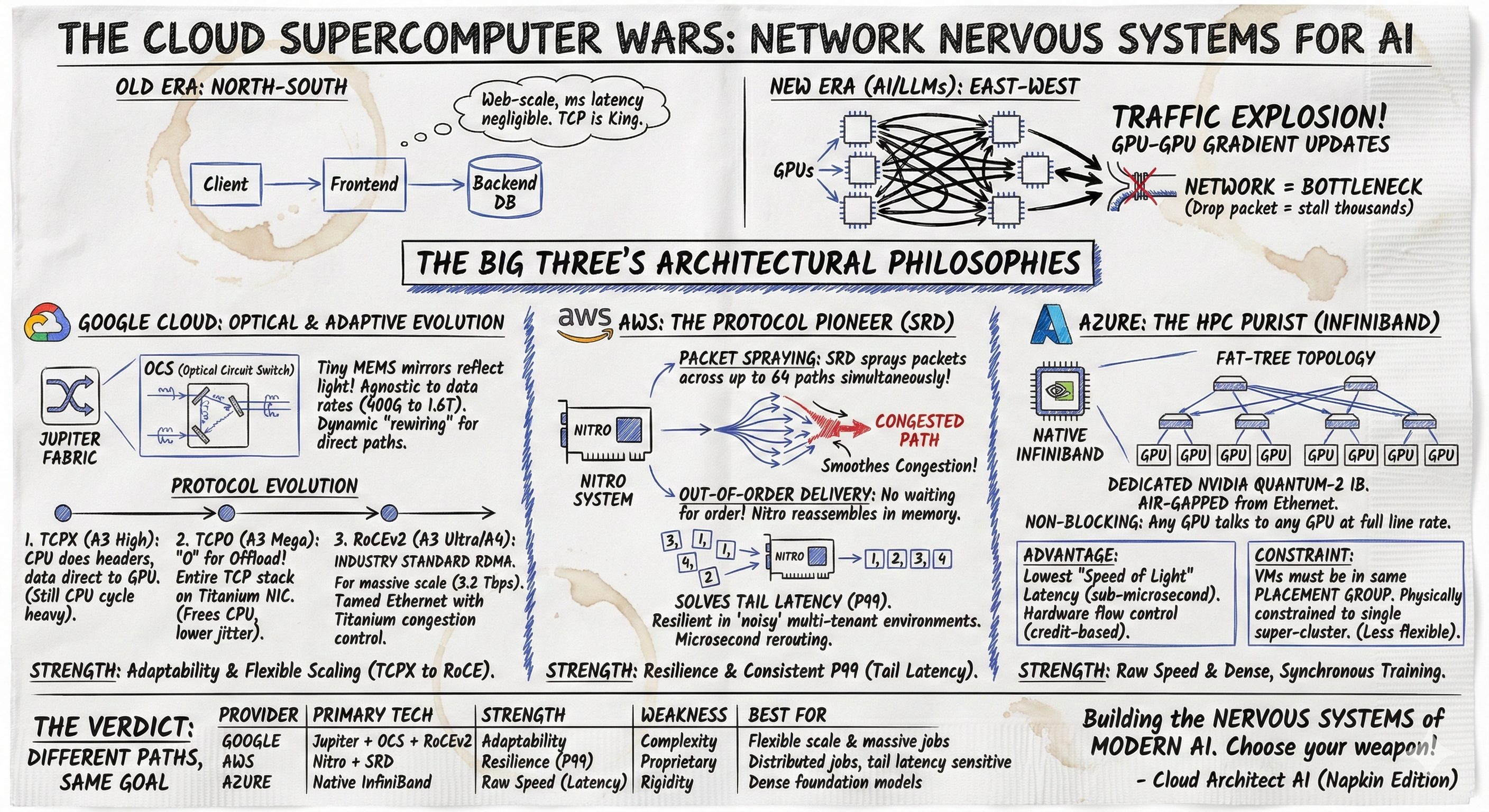

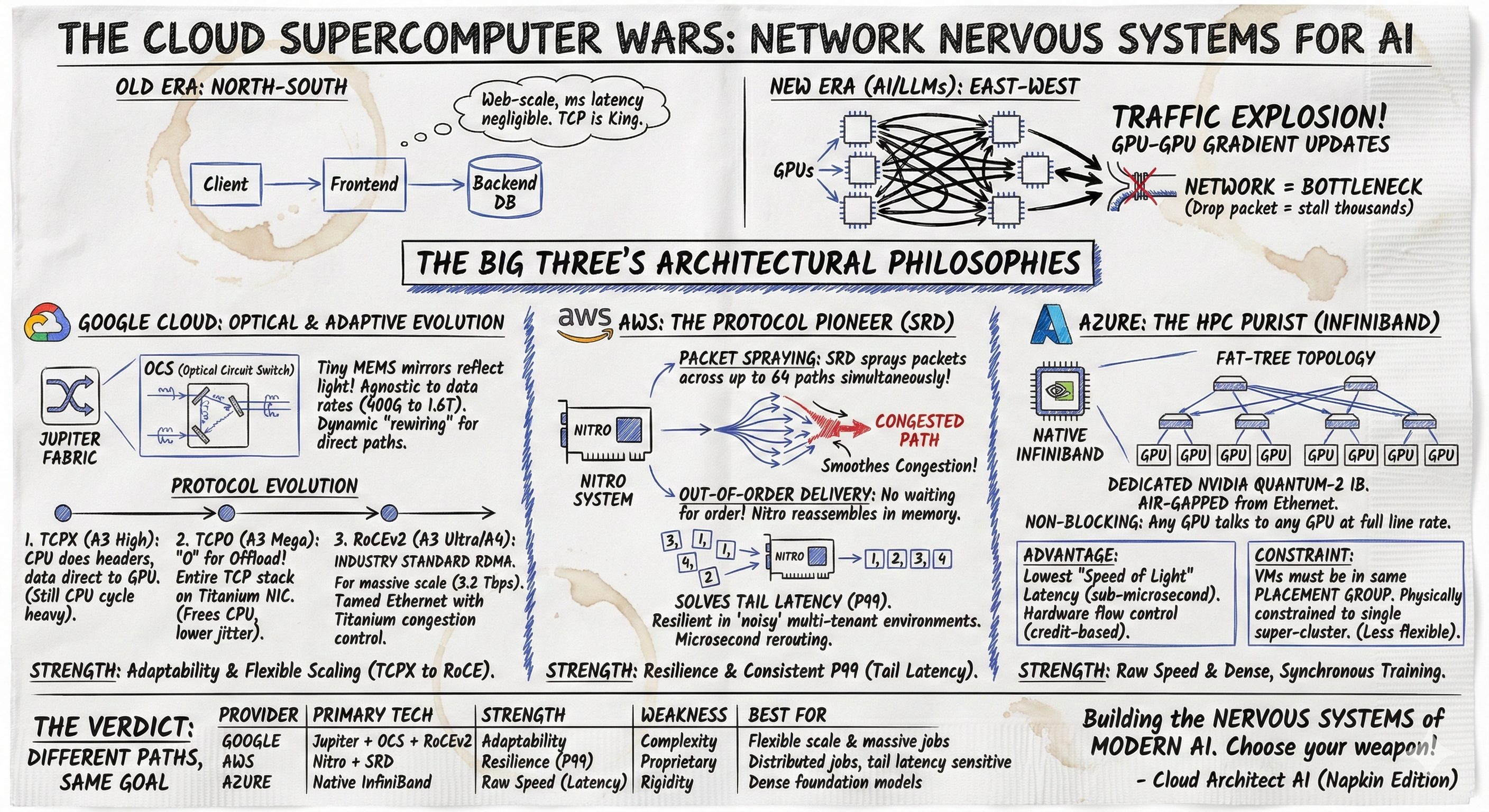

Generative AI has shifted data center traffic patterns, making network performance the new bottleneck for model training. This post contrasts how the "Big Three" cloud providers utilize distinct architectures to solve this challenge. We examine Google Cloud’s evolution from proprietary TCPX to standard RoCEv2 on its optical Jupiter fabric, AWS’s innovation of the Scalable Reliable Datagram (SRD) protocol to mitigate Ethernet congestion, and Azure’s adoption of native InfiniBand.