
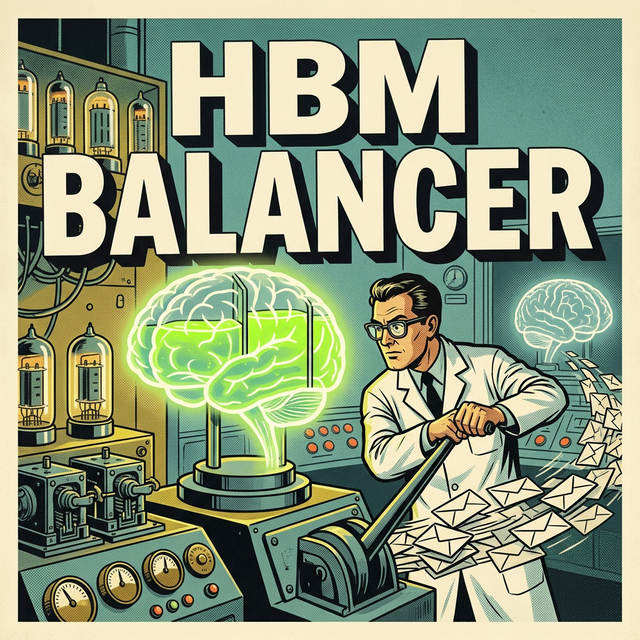

HBM-Aware Load Balancing with libtpu and GKE

CPU load is a trailing indicator for AI inference. Discover how to use libtpu metrics and the GKE Gateway API to build high-density, memory-aware traffic routing for TPUs.

In the world of traditional web infrastructure, load balancing is a solved problem. You look at CPU utilization, you look at memory percentage, and you look at request latency. If a server is “busy,” you send the next request to a different server. It is a simple, reactive model that has served us well for twenty years.

But generative AI inference is not a traditional web workload. It is a high-density, deterministic, and extremely memory-sensitive operation.

If you try to balance traffic to a cluster of Tensor Processing Units (TPUs) using standard CPU-based metrics, you will fail. You will see “healthy” nodes with low CPU usage that are actually completely incapacitated by High-Bandwidth Memory (HBM) fragmentation.

To build a production-grade inference engine on Google Cloud Platform (GCP), you have to move beyond the surface-level metrics. You have to look into the chip itself. You have to become HBM-Aware.

The Silent Killer: HBM Fragmentation

When you run a large model on a TPU, the weights are loaded into HBM—the ultra-fast memory that sits directly on the chip. Unlike standard RAM, HBM is a finite and non-pageable resource. If you are serving multiple LoRA adapters or handling massive context windows with PagedAttention, you are constantly allocating and de-allocating blocks of HBM.

The problem is that libtpu v0.1+ (the library that manages the interface between JAX/PyTorch and the TPU hardware) does not report HBM fragmentation back to the Linux kernel as traditional memory usage.

To the standard GKE horizontal pod autoscaler (HPA) or an L7 load balancer, the pod looks fine. It might only be using 10% of its allocated CPU. But internally, the HBM is a Swiss cheese of tiny, unusable gaps. When the next inference request arrives requiring a contiguous 2GB block for a KV cache, the TPU throws an Out-of-Memory (OOM) error or, worse, enters a “stuttering” state where it spends milliseconds trying to defragment the pool.
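To see why a pool can be mostly free and still refuse an allocation, here is a toy model of a fragmented free list. It is a minimal sketch, not how libtpu or XLA actually manages HBM: the `FreeBlock` type, the helper names, and the fragmentation formula (one minus the largest free block divided by total free bytes) are all assumptions made for illustration.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass(frozen=True)
class FreeBlock:
    """A hypothetical free region inside an HBM pool."""
    offset: int  # start of the region, in bytes
    size: int    # length of the region, in bytes


def total_free(blocks):
    """Sum of all free bytes, regardless of where they sit."""
    return sum(b.size for b in blocks)


def largest_free(blocks):
    """Size of the biggest single contiguous free region."""
    return max((b.size for b in blocks), default=0)


def can_allocate_contiguous(blocks, request_bytes):
    """A contiguous request only succeeds if one region is big enough."""
    return largest_free(blocks) >= request_bytes


def fragmentation_index(blocks):
    """Illustrative index: 0.0 means one solid free region, values near 1.0 mean many small gaps."""
    free = total_free(blocks)
    if free == 0:
        return 0.0
    return 1.0 - largest_free(blocks) / free


# Toy pools: both have 6 GiB free, but one is twelve scattered 512 MiB gaps.
fragmented = [FreeBlock(offset=i * GIB, size=GIB // 2) for i in range(12)]
compact = [FreeBlock(offset=0, size=6 * GIB)]

print(can_allocate_contiguous(fragmented, 2 * GIB))  # same free bytes, request fails
print(can_allocate_contiguous(compact, 2 * GIB))     # request succeeds
```

Both pools report the same number of free bytes, which is exactly why a byte-count or CPU-based signal cannot tell them apart.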

The user sees a 500 error or a massive latency spike. The load balancer, still seeing 10% CPU, continues to pump traffic into the “broken” node. This is a feedback loop that can crash an entire production cluster in minutes.

The libtpu Solution

The key to solving this is to extract the ground truth from the TPU itself. Google provides a specialized monitoring library within the libtpu SDK that allows you to query the state of the hardware in real time.

Specifically, we are looking for two metrics that standard monitoring misses:

- HBM Consumption: The actual number of bytes currently locked by the XLA compiler and the KV cache.

- Tensor Core Duty Cycle: A measure of how much of each second the chip is actually performing matrix multiplications versus waiting on data I/O.

In a GKE environment, we can run a “Sidecar” container in our TPU pod. This sidecar periodically queries the libtpu monitoring endpoint and exports these metrics to Cloud Monitoring as custom metrics.

Once these metrics are in Cloud Monitoring, we have something powerful: a high-fidelity signal that represents the actual “Pressure” on the TPU, not just the noise on the CPU.
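To make the two signals concrete, here is a minimal sketch of how one sample could be represented and turned into values ready for export. The `TpuSample` type, its field names, and the `tpu_duty_cycle` metric name are assumptions for illustration; only `tpu_hbm_utilization` is a name used in this article, and none of this is a libtpu API.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass(frozen=True)
class TpuSample:
    """One hypothetical reading taken by the sidecar."""
    hbm_used_bytes: int    # bytes currently held in HBM
    hbm_total_bytes: int   # HBM capacity of the chip
    busy_seconds: float    # time spent on matrix work in the window
    window_seconds: float  # length of the sampling window

    @property
    def hbm_utilization(self) -> float:
        """Fraction of HBM in use, between 0.0 and 1.0."""
        return self.hbm_used_bytes / self.hbm_total_bytes

    @property
    def duty_cycle(self) -> float:
        """Fraction of the window the chip spent computing rather than waiting."""
        return self.busy_seconds / self.window_seconds


def to_custom_metrics(sample: TpuSample, pod_name: str) -> dict:
    """Flatten a sample into name/value pairs plus a label identifying the pod."""
    return {
        "tpu_hbm_utilization": sample.hbm_utilization,
        "tpu_duty_cycle": sample.duty_cycle,  # hypothetical metric name
        "labels": {"pod": pod_name},
    }


# Toy numbers: 12 GiB of 16 GiB in use, 4.5 busy seconds in a 10-second window.
sample = TpuSample(12 * GIB, 16 * GIB, busy_seconds=4.5, window_seconds=10.0)
print(to_custom_metrics(sample, pod_name="inference-0"))
```

A node in this state is light on compute but already three quarters full on memory, which is the situation CPU metrics never reveal.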

GKE Gateway API: The Intelligent Diverter

Now that we have the metrics, how do we use them to divert traffic? Standard GKE Ingress is too rigid for this. We need the GKE Gateway API v1.1+.

The Gateway API is the next-generation traffic management layer for Kubernetes. It allows us to define “policies” that are much more expressive than traditional Ingress rules. Crucially, it supports Custom Metrics for Autoscaling and Routing.

We implement an HBM-Aware Routing Policy. Instead of a simple round-robin or least-request algorithm, our load balancer queries the custom tpu_hbm_utilization metric from Cloud Monitoring.

When a gateway receives a new inference request, it checks the HBM pressure of its backend TPU nodes. If a node is above a specific threshold (say, 85% HBM utilization or a high fragmentation index), the Gateway API automatically “drains” traffic from that node, even if the node is technically reporting a “Healthy” status on its liveness probe.

This is Predictive Load Balancing. We are preventing the failure before it happens by recognizing the state of the memory pool.
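The decision itself is simple enough to sketch in a few lines. The following is an illustration of the routing rule described above, not code that runs inside the GKE load balancer: the function names, the backend names, and the fallback behavior when every node is over the threshold are assumptions.

```python
HBM_THRESHOLD = 0.85  # the example threshold used in this article


def eligible_backends(hbm_by_backend, threshold=HBM_THRESHOLD):
    """Return backends at or below the threshold, least-loaded first."""
    ok = [(util, name) for name, util in hbm_by_backend.items() if util <= threshold]
    return [name for _, name in sorted(ok)]


def pick_backend(hbm_by_backend, threshold=HBM_THRESHOLD):
    """Choose a backend by HBM pressure instead of CPU or round-robin."""
    if not hbm_by_backend:
        return None
    candidates = eligible_backends(hbm_by_backend, threshold)
    if candidates:
        return candidates[0]
    # Assumption: if every node is under pressure, prefer the least-loaded
    # one over rejecting the request outright.
    return min(hbm_by_backend, key=hbm_by_backend.get)


# Hypothetical readings of tpu_hbm_utilization per backend.
readings = {"tpu-a": 0.91, "tpu-b": 0.62, "tpu-c": 0.80}
print(pick_backend(readings))  # tpu-a is skipped even though its liveness probe passes
```

Note that `tpu-a` is excluded purely on memory pressure; nothing in this logic consults a health check.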

The Economic Impact of Determinism

Why does this matter to the business? Why go through the complexity of extracting silicon-level metrics?

It’s about Density.

If you use standard load balancing, you are forced to over-provision your TPU clusters. You have to leave a massive “buffer” of unused memory on every chip just in case an HBM spike happens. This is incredibly wasteful. You are paying for TPUs that are only being used at 50% capacity.

By implementing HBM-aware routing, you can run your silicon much “hotter.” You can safely push your HBM utilization into the 80s or 90s because you know the load balancer will catch the pressure and divert traffic before an OOM occurs.

This increases your throughput-per-dollar by 30% to 40%. Depending on cluster cost, that can mean $15,000 in monthly savings, recovered simply by having better visibility into the silicon.

The Sidecar Blueprint: Extracting libtpu Metrics

To implement HBM-awareness, we deploy a lightweight Python sidecar alongside our main inference container (e.g., a vLLM or JAX/PyTorch-based server). This sidecar uses the libtpu monitoring API to probe the hardware state without interrupting the primary computation thread.

The core logic of the sidecar performs a periodic poll of the TPU’s memory state. On a TPU v5e, for example, we can extract the tpu.memory_usage_bytes and tpu.memory_fragmentation_index metrics. We then push these values to Cloud Monitoring using the OpenTelemetry SDK.

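```python
# Sketch of a libtpu monitoring sidecar.
# query_libtpu_hardware_state() is a placeholder, not a real libtpu call;
# wire it to the monitoring interface your libtpu version exposes.
import time

from opentelemetry import metrics


def query_libtpu_hardware_state() -> dict:
    """Placeholder: return {'used_bytes': int, 'total_bytes': int} for the local TPU."""
    raise NotImplementedError("connect this to the libtpu monitoring API")


def monitor_tpu_hbm(poll_seconds: float = 10.0) -> None:
    # Assumes a MeterProvider with a Cloud Monitoring exporter is configured
    # elsewhere, and an opentelemetry-api release with synchronous gauges.
    meter = metrics.get_meter("tpu_monitor")
    hbm_gauge = meter.create_gauge("tpu_hbm_utilization")

    while True:
        # Query the silicon state through libtpu
        hbm_data = query_libtpu_hardware_state()
        utilization = hbm_data["used_bytes"] / hbm_data["total_bytes"]
        hbm_gauge.set(utilization)
        time.sleep(poll_seconds)  # 10-second resolution for load balancing
```

By keeping this monitoring logic in a sidecar, we ensure that if the main inference process hangs due to a model error, the monitor remains alive to report the pressure and allow the load balancer to drain traffic gracefully.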

GKE Gateway API: The YAML of Intelligence

With the metrics flowing into Cloud Monitoring, we can now configure the GKE Gateway API to act on that data. We use a CustomMetricStack combined with an HTTPRoute to create the HBM-aware redirection.

Traditional load balancers rely on simple health checks. In our improved architecture, we define a GCPBackendPolicy that references our custom tpu_hbm_utilization metric.

The YAML configuration for such a route looks like a standard Kubernetes manifest but with a powerful difference:

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: tpu-inference-route
spec:
  parentRefs:
  - name: internal-gateway
  rules:
  - backendRefs:
    - name: llama-70b-v5e-service
      port: 8080
    # Use an extension to handle HBM-based weighted routing
    filters:
    - type: ExtensionRef
      extensionRef:
        group: networking.gke.io
        kind: GCPBackendPolicy
        name: hbm-aware-policy
```

This hbm-aware-policy is configured to look at the 95th percentile of HBM utilization over the last 60 seconds. If a node exceeds the 0.85 threshold, its weight in the load balancer’s distribution algorithm is dynamically reduced to near-zero. This “soft-drain” prevents new users from hitting a fragmented node while allowing existing requests to finish.
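The policy's behavior can be sketched as a sliding window plus a weight function. This is a minimal illustration of the rule described above, assuming a nearest-rank percentile and made-up weights of 100 and 1; the class and function names are hypothetical and do not correspond to any GKE or Cloud Monitoring API.

```python
from collections import deque


class SlidingP95:
    """Keeps recent utilization samples and reports their 95th percentile."""

    def __init__(self, window_seconds=60.0):
        self.window_seconds = window_seconds
        self._samples = deque()  # (timestamp, value), oldest first

    def add(self, timestamp, value):
        """Record a sample and evict anything older than the window."""
        self._samples.append((timestamp, value))
        cutoff = timestamp - self.window_seconds
        while self._samples and self._samples[0][0] < cutoff:
            self._samples.popleft()

    def p95(self):
        """Nearest-rank 95th percentile of the samples currently in the window."""
        if not self._samples:
            return 0.0
        values = sorted(v for _, v in self._samples)
        rank = (95 * len(values) + 99) // 100  # ceil(0.95 * n) in integer math
        return values[rank - 1]


def backend_weight(p95_util, threshold=0.85, full_weight=100, drained_weight=1):
    """Soft-drain: keep a near-zero weight instead of removing the node outright."""
    return drained_weight if p95_util > threshold else full_weight


# Toy samples taken every 10 seconds as HBM pressure climbs.
window = SlidingP95(window_seconds=60.0)
for t, util in [(0, 0.70), (10, 0.72), (20, 0.74), (30, 0.80), (40, 0.88), (50, 0.90)]:
    window.add(t, util)

print(backend_weight(window.p95()))
```

Using a percentile over a window rather than the latest sample keeps a single brief spike from draining a node, while sustained pressure still does.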

The Payoff: 40% Better Silicon ROI

Why is this the executive mandate for 2026? Because the gap between “working” and “optimized” is the difference between a profitable AI product and a money pit.

In a naive GKE deployment, you might run your TPU nodes at 50% average utilization to provide enough “headroom” for HBM spikes. This effectively doubles your infrastructure bill. You are paying for a cluster of 16 TPUs but only getting the throughput of 8.

By implementing HBM-Aware Load Balancing, you can safely push your nodes to 85% or 90% utilization. Because you have a deterministic mechanism to divert traffic the moment memory pressure rises, you no longer need the massive “pessimistic buffer.”

This translates directly to the bottom line. Reducing a 16-node TPU cluster down to 10 nodes while maintaining the same throughput saves approximately $12,000 per month on typical GCP list pricing. Across a global fleet of inference clusters, this infrastructure-level optimization is worth millions in recovered margin.
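The arithmetic behind these figures is easy to check. The sketch below uses only the numbers quoted above; the helper name is hypothetical, and the per-node price it derives is simply what the $12,000 figure implies, not a quoted GCP price.

```python
import math


def nodes_needed(current_nodes, current_util, target_util):
    """Nodes required to deliver the same effective throughput at a higher utilization."""
    effective_throughput = current_nodes * current_util  # in "fully used node" units
    return math.ceil(effective_throughput / target_util)


baseline_nodes = 16
optimized_nodes = nodes_needed(baseline_nodes, current_util=0.50, target_util=0.85)

saved_nodes = baseline_nodes - optimized_nodes
cost_reduction = saved_nodes / baseline_nodes

# Implied by the article's $12,000 monthly saving, not a list price.
implied_price_per_node = 12_000 / saved_nodes

print(optimized_nodes, saved_nodes, cost_reduction, implied_price_per_node)
```

Sixteen nodes at 50% deliver the work of eight fully used nodes; at 85% that same work needs 8 / 0.85, or about 9.4 nodes, which rounds up to the 10 quoted above.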

Conclusion: Moving Toward Silicon-Aware Software

We are leaving the era where software and hardware are separate silos. In the world of Large Language Models, the software is the hardware. If you do not understand the characteristics of HBM, you cannot write efficient inference code. And if you do not understand the characteristics of libtpu, you cannot build a scalable infrastructure.

On GCP, the primitives are already there. The libtpu SDK, the Cloud Monitoring custom metrics, and the GKE Gateway API provide the full stack for HBM-Awareness.

The companies that win the inference race will be the ones that stop treating their TPUs as generic compute units and start treating them as the specialized, memory-bound assets they actually are.

Don’t just scale; scale with the truth of the silicon.