Demystifying Google TPU SparseCore: Accelerating Recommendation Systems

How Google TPU SparseCore solves embedding lookup bottlenecks in recommender models. Learn the co-designed architecture of Trillium's SparseCores.

CPU load is a trailing indicator for AI inference. Discover how to use libtpu metrics and the GKE Gateway API to build high-density, memory-aware traffic routing for TPUs.

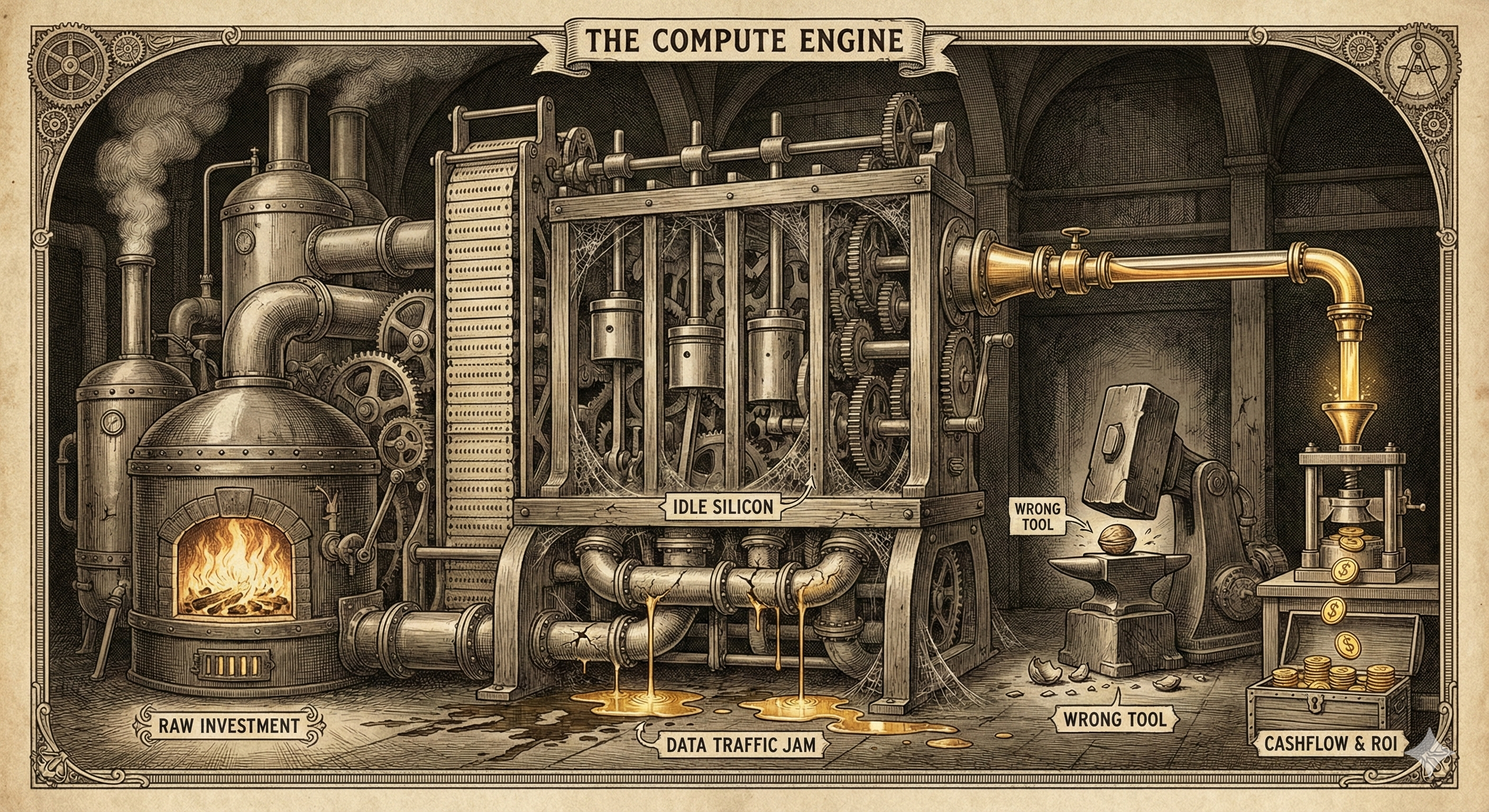

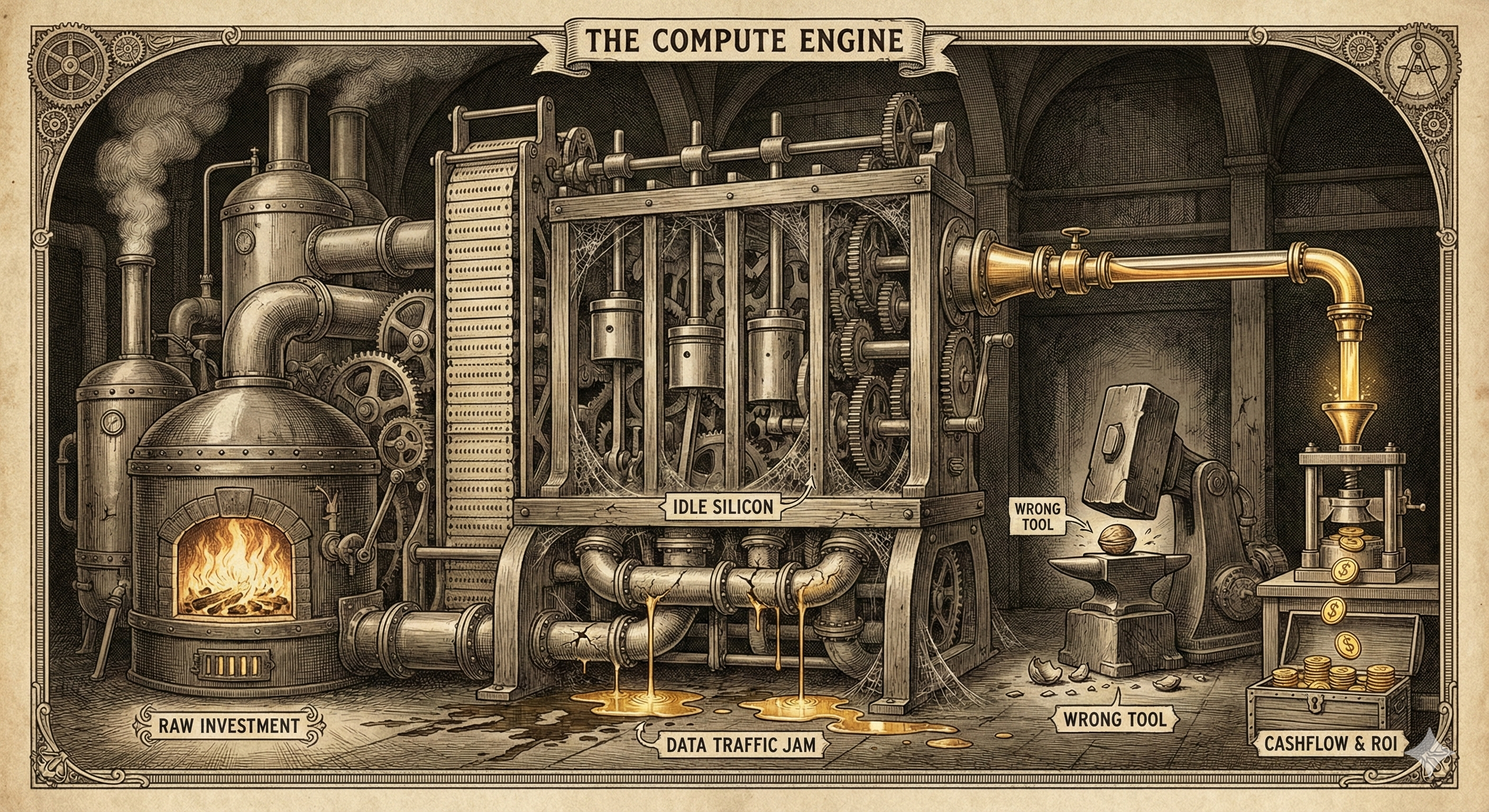

The AI industry is shifting from celebrating large compute budgets to hunting for efficiency. Your competitive advantage is no longer your GPU count, but your cost-per-inference.

This post demystifies hardware acceleration and the competing sparsity philosophies of Google TPUs and NVIDIA. It connects novel architectures, like Mixture-of-Experts, to hardware design strategy and its impact on performance, cost, and developer ecosystem trade-offs.

This post contrasts the switching technologies used by NVIDIA and by Google's TPUs. Understanding their different approaches is key to matching modern AI workloads, which demand heavy data movement, to the optimal hardware.

It's not just about specs. This post breaks down the core trade-off between the GPU's versatile power and the TPU's hyper-efficient, specialized design for AI workloads.