Single-Batch Inference: Speculative Decoding on an A100

See how speculative decoding performs for single-batch requests on an NVIDIA A100. We analyze acceptance rates, latency, and the mechanics of the draft model gamble.

See how speculative decoding performs for single-batch requests on an NVIDIA A100. We analyze acceptance rates, latency, and the mechanics of the draft model gamble.

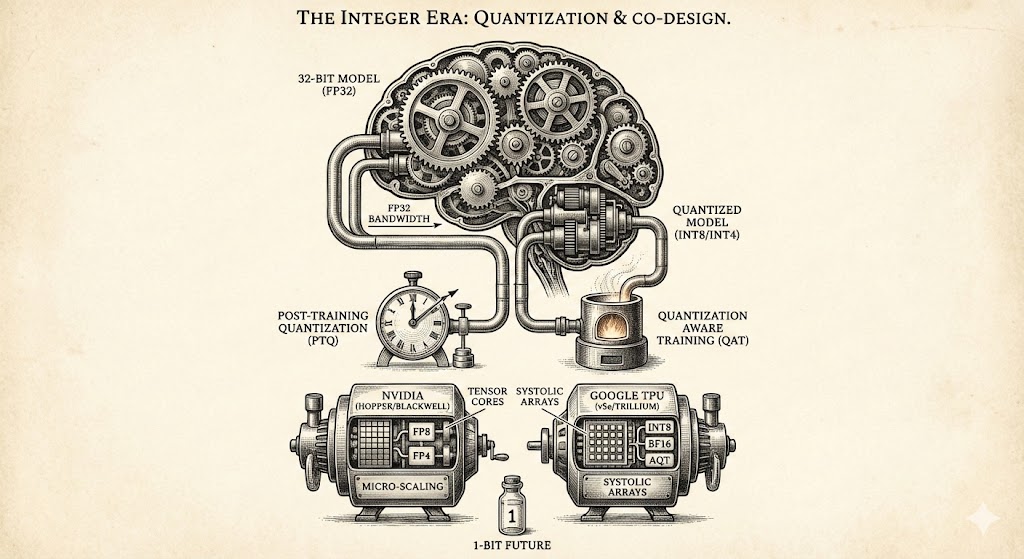

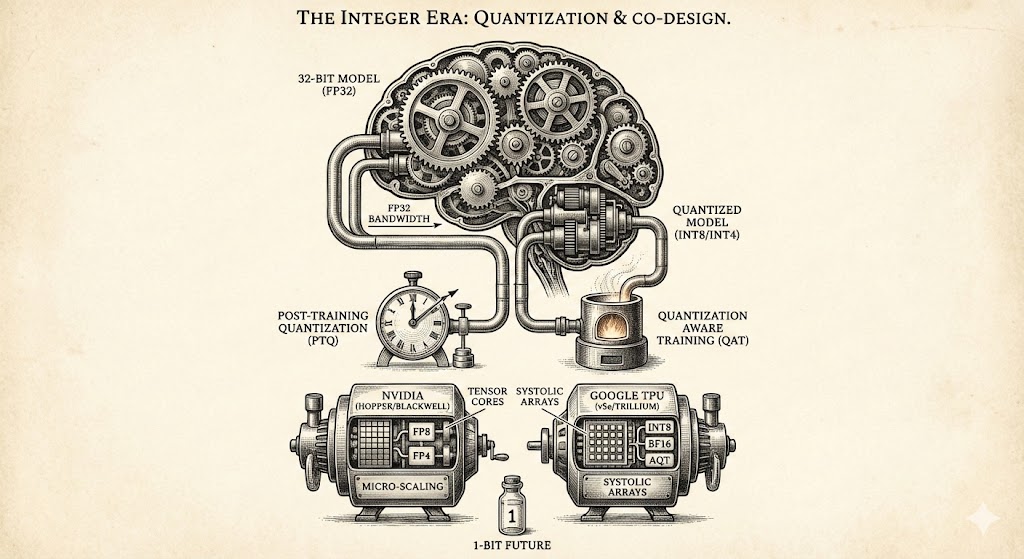

Explore how quantization and hardware co-design overcome memory bottlenecks, comparing NVIDIA and Google architectures while looking toward the 1-bit future of efficient AI model development.