Benchmarking FP8 Stability: Where Gradients Go to Die

FP8 is the new frontier for training efficiency, but it breaks in the most sensitive layers. We dissect the E4M3/E5M2 split and how to spot divergence.

FP8 is the new frontier for training efficiency, but it breaks in the most sensitive layers. We dissect the E4M3/E5M2 split and how to spot divergence.

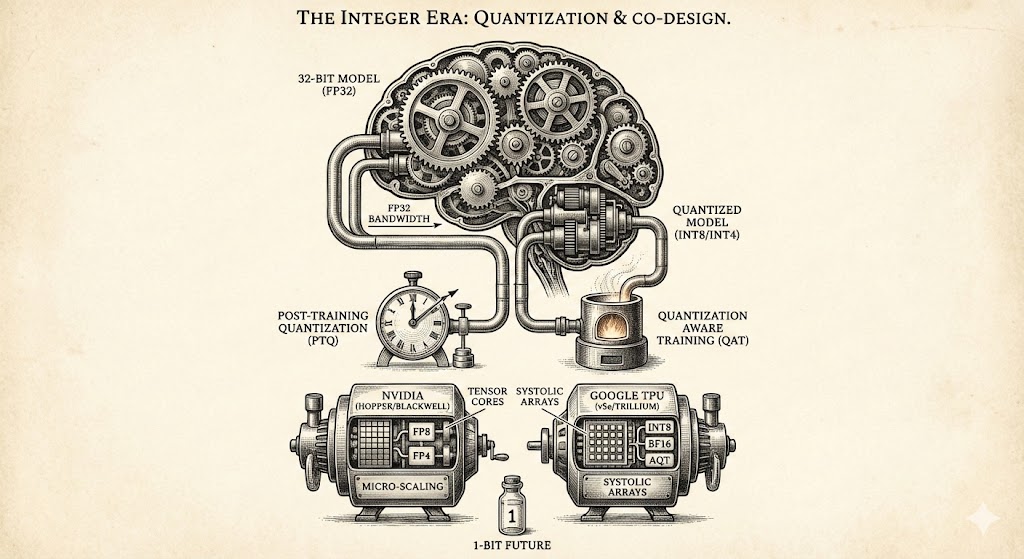

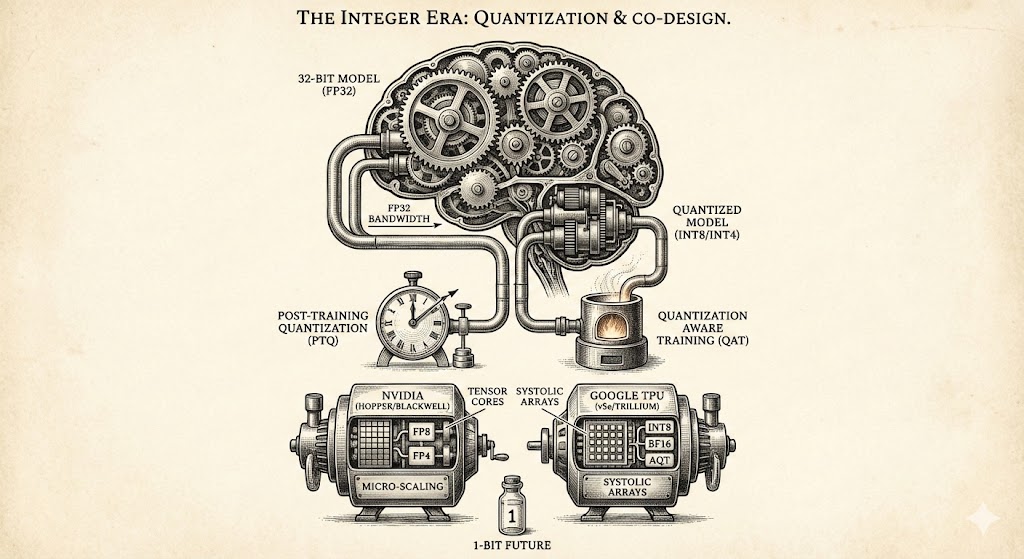

Explore how quantization and hardware co-design overcome memory bottlenecks, comparing NVIDIA and Google architectures while looking toward the 1-bit future of efficient AI model development.

Nvidia Blackwell microscaling and the new FP4 formats double inference speeds. Dive into how the second-generation Transformer Engine uses scale factors and sparsity for AI workloads.