KV Cache Quantization: Fitting Larger Context Windows on Single GPUs

The bottleneck for long-context agents is memory, not compute. Learn how to implement FP8 or INT8 KV caching to roughly double the context length that fits in the same GPU memory, compared with a 16-bit cache, and keep inference viable at scale.
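To see where the "double your context length" figure comes from, here is a minimal sketch of the KV cache arithmetic. The model shape (32 layers, 8 KV heads, head dimension 128) and the 16 GiB cache budget are hypothetical values chosen for illustration, and the estimate ignores the small overhead of storing quantization scales.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bytes_per_elem, batch_size=1):
    """Approximate KV cache size: keys and values for every layer, head, and token."""
    # Factor of 2 accounts for storing both the key and the value tensor.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_elem * batch_size)


def max_context_tokens(budget_bytes, num_layers, num_kv_heads, head_dim,
                       bytes_per_elem, batch_size=1):
    """Largest sequence length whose KV cache fits in the given memory budget."""
    per_token = kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                               1, bytes_per_elem, batch_size)
    return budget_bytes // per_token


# Hypothetical model shape and memory budget (assumptions, not from the article).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
BUDGET = 16 * 2**30  # 16 GiB reserved for the KV cache

fp16_tokens = max_context_tokens(BUDGET, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem=2)
int8_tokens = max_context_tokens(BUDGET, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem=1)

print(f"FP16 cache: {fp16_tokens} tokens")
print(f"8-bit cache: {int8_tokens} tokens")
```

Because the cache size is linear in bytes per element, moving from a 16-bit to an 8-bit format halves the per-token cost, so the same budget holds about twice as many tokens.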

How aggressive INT8 quantization goes wrong when the calibration data is unrepresentative, and how the blind pursuit of efficiency can ruin the end-user experience.
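The calibration failure mode can be illustrated with a small sketch. It assumes symmetric per-tensor INT8 quantization with a scale taken from the largest absolute value seen during calibration; the sample values and function names are hypothetical, and real inference stacks may use per-channel or per-token scales instead.

```python
def calibrate_scale(samples, qmax=127):
    """Symmetric scale from the largest absolute value in the calibration set."""
    amax = max(abs(x) for x in samples)
    return amax / qmax if amax > 0 else 1.0


def quantize(values, scale, qmax=127):
    """Round to the nearest integer step and clip to the INT8 range."""
    return [max(-qmax, min(qmax, round(v / scale))) for v in values]


def dequantize(quantized, scale):
    """Map integer codes back to approximate real values."""
    return [q * scale for q in quantized]


def max_abs_error(values, scale):
    """Worst-case reconstruction error after a quantize/dequantize round trip."""
    recon = dequantize(quantize(values, scale), scale)
    return max(abs(a - b) for a, b in zip(values, recon))


# Hypothetical data: calibration never sees the large outlier that production does.
calibration = [0.1, -0.4, 0.9, -1.0]
production = [0.2, -0.7, 8.0]

narrow_scale = calibrate_scale(calibration)               # fitted to [-1, 1]
wide_scale = calibrate_scale(calibration + production)    # fitted to [-8, 8]

print("error with unrepresentative calibration:", max_abs_error(production, narrow_scale))
print("error with representative calibration:  ", max_abs_error(production, wide_scale))
```

With the narrow scale, the outlier is clipped to the top of the INT8 range and most of its magnitude is lost, whereas the representative scale keeps the error within half a quantization step at the cost of coarser resolution for small values.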

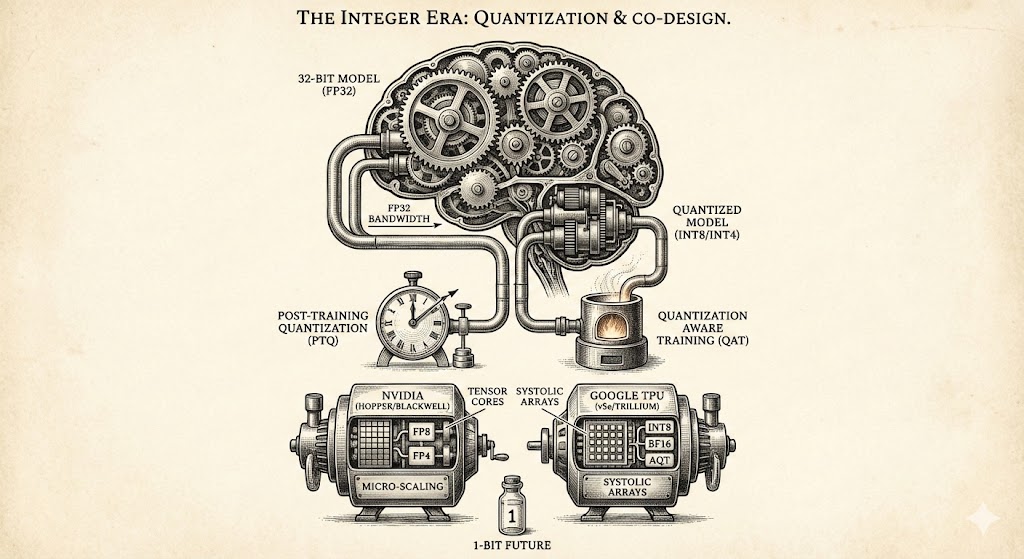

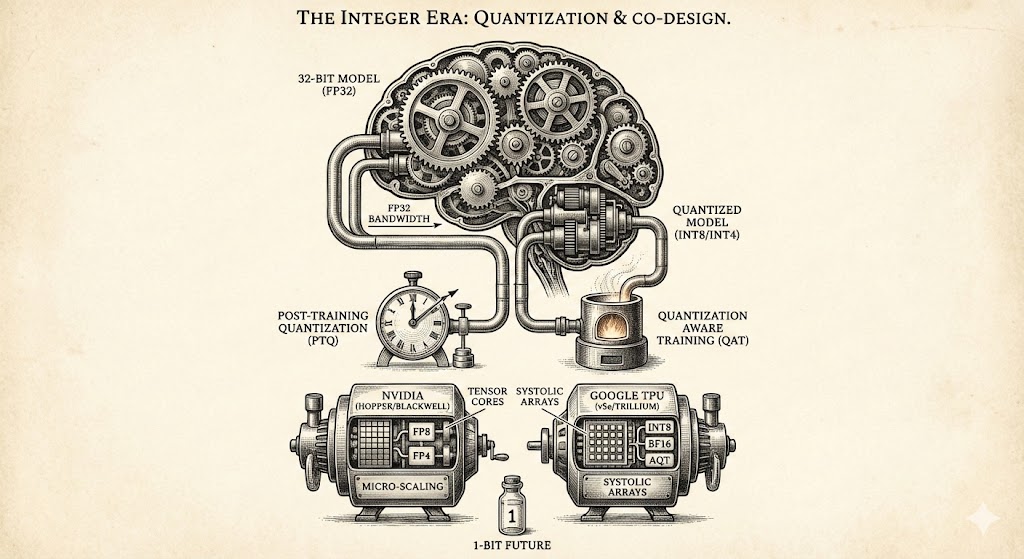

Explore how quantization and hardware co-design overcome memory bottlenecks, comparing NVIDIA and Google architectures while looking toward the 1-bit future of efficient AI model development.