Compiling TensorRT Engines: The Calibration Trap

What happens when aggressive INT8 quantization goes wrong because of unrepresentative calibration data, and how the blind pursuit of efficiency can ruin the end-user experience.

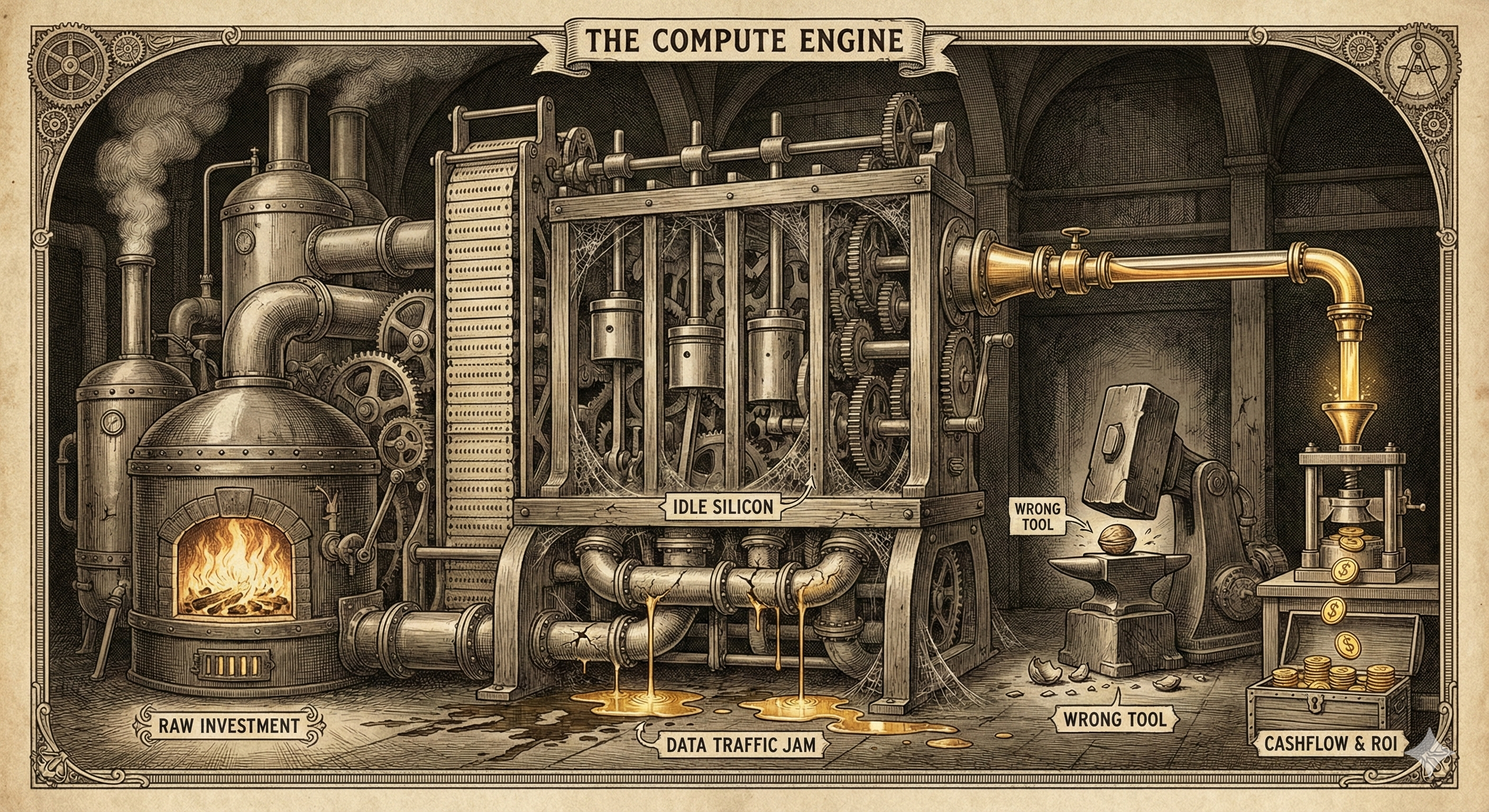

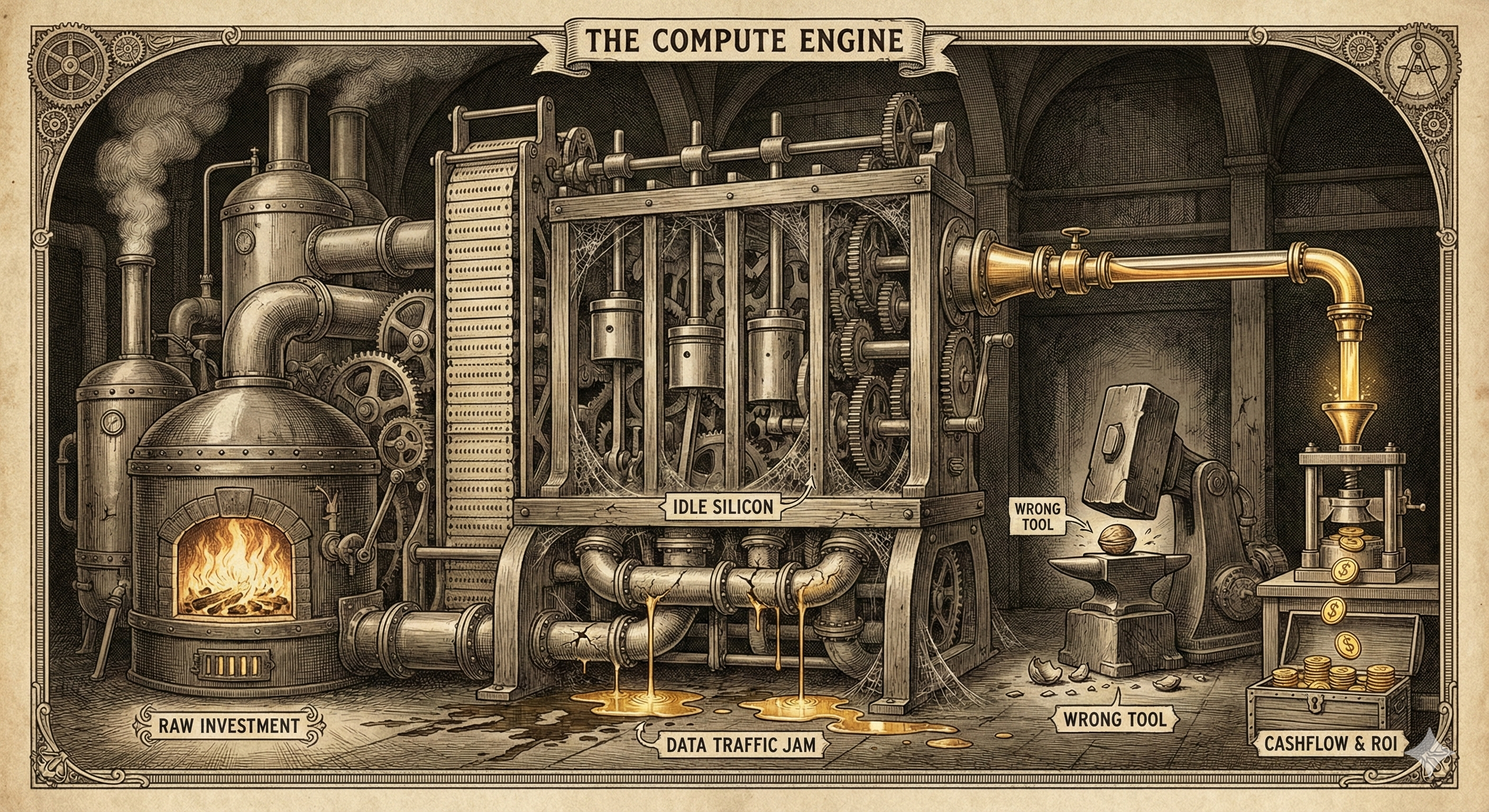
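To make the calibration trap concrete, here is a minimal sketch in plain Python. It is not TensorRT's calibrator: it assumes simple symmetric max calibration (the largest observed magnitude maps to 127), and the activation distributions are synthetic and hypothetical. It compares the INT8 round-trip error on "production" values when the scale comes from a narrow calibration set versus a representative one.

```python
import random

def calibrate_scale(samples):
    """Symmetric max calibration (an assumption): largest magnitude maps to 127."""
    return max(abs(x) for x in samples) / 127.0

def fake_quantize(x, scale):
    """Quantize to INT8 and dequantize, clamping to [-127, 127]."""
    q = max(-127, min(127, round(x / scale)))
    return q * scale

def mean_abs_error(values, scale):
    """Average round-trip error introduced by quantization."""
    return sum(abs(v - fake_quantize(v, scale)) for v in values) / len(values)

rng = random.Random(0)

# Hypothetical production activations: mostly small, plus a heavy tail.
production = [rng.gauss(0.0, 1.0) for _ in range(5000)]
production += [rng.gauss(0.0, 8.0) for _ in range(500)]

# Unrepresentative calibration set: only the small-valued regime.
narrow_calib = [rng.gauss(0.0, 1.0) for _ in range(500)]
# Representative calibration set: a random sample of production traffic.
good_calib = rng.sample(production, 500)

narrow_scale = calibrate_scale(narrow_calib)
good_scale = calibrate_scale(good_calib)
narrow_err = mean_abs_error(production, narrow_scale)
good_err = mean_abs_error(production, good_scale)

print(f"narrow calibration: scale={narrow_scale:.4f}, mean abs error={narrow_err:.4f}")
print(f"good calibration:   scale={good_scale:.4f}, mean abs error={good_err:.4f}")
```

With the narrow set, the scale is too small, so every tail value is clamped at the edge of the INT8 range. The representative set gives up some resolution on small values but avoids that clipping, which is the trade-off calibration data is meant to settle.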

Buying expensive GPUs to wait on cheap storage is an operational failure. We break down the math of 'Badput' and why high-performance I/O is actually a discount.

If your training loop isn't fault-tolerant, you're paying a 40% 'insurance tax' to your cloud provider. We look at the architectural cost of 30-second preemption notices.

When your model doesn't fit on one GPU, you're no longer just learning to code; you're learning physics. We dive deep into the primitives of NCCL, distributed collectives, and why the interconnect is the computer.

The AI industry is shifting from celebrating large compute budgets to hunting for efficiency. Your competitive advantage is no longer your GPU count, but your cost-per-inference.

NCCL debugging is critical for distributed training bottlenecks. Learn to set NCCL_DEBUG, tune the NCCL_ALGO environment variable for Ring, Tree, or CollNet, and troubleshoot GPU network failures.
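As a rough illustration of the environment variables mentioned above, the sketch below builds an environment with `NCCL_DEBUG` and, optionally, `NCCL_ALGO` set. The helper name `nccl_debug_env` and the commented launch command are hypothetical. The sketch assumes the variables are applied before the training process starts, since NCCL reads its environment at initialization.

```python
import os

def nccl_debug_env(algo=None, debug_level="INFO"):
    """Return a copy of the current environment with NCCL debug settings applied.

    Hypothetical helper for illustration only.
    """
    env = dict(os.environ)
    env["NCCL_DEBUG"] = debug_level   # e.g. WARN, INFO, TRACE
    if algo is not None:
        env["NCCL_ALGO"] = algo       # e.g. "Ring" or "Tree"
    return env

env = nccl_debug_env(algo="Ring")
print(env["NCCL_DEBUG"], env["NCCL_ALGO"])

# Hypothetical launch (script name and launcher are assumptions):
# subprocess.run(["torchrun", "--nproc_per_node=8", "train.py"], env=env)
```

Pinning the algorithm like this is mainly useful for isolating a problem. Leaving `NCCL_ALGO` unset lets NCCL choose for itself.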