Squeezing the Inference Lever: The Economics of LLM Throughput

Inference price isn't a fixed cost; it's an engineering variable. We break down the three distinct levers of efficiency: Model Compression, Runtime Optimization, and Deployment Strategy.

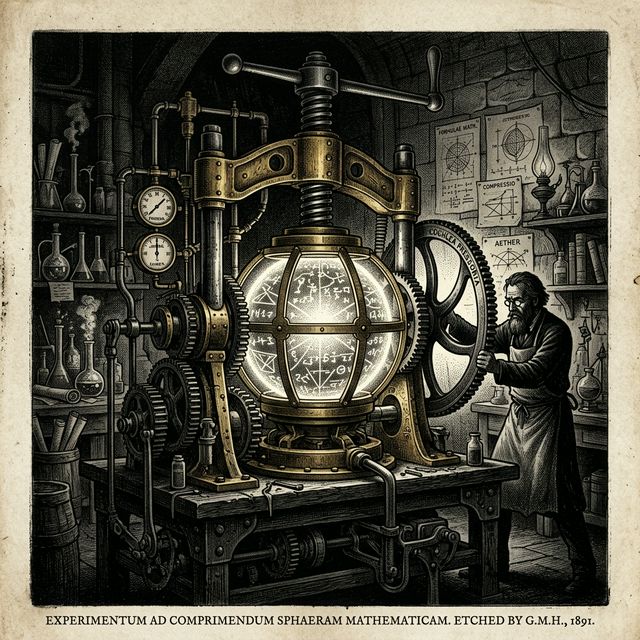

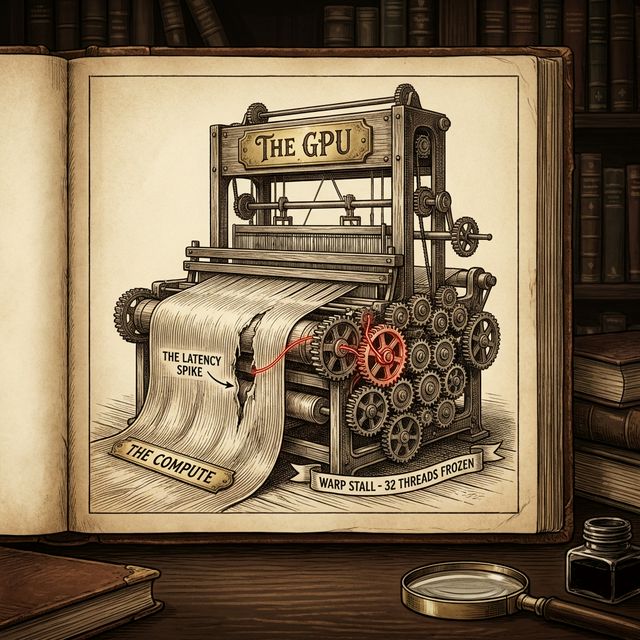

A war story of tracing a 5 ms latency spike to a single loose thread, plus how to read Nsight Systems and spot warp divergence.

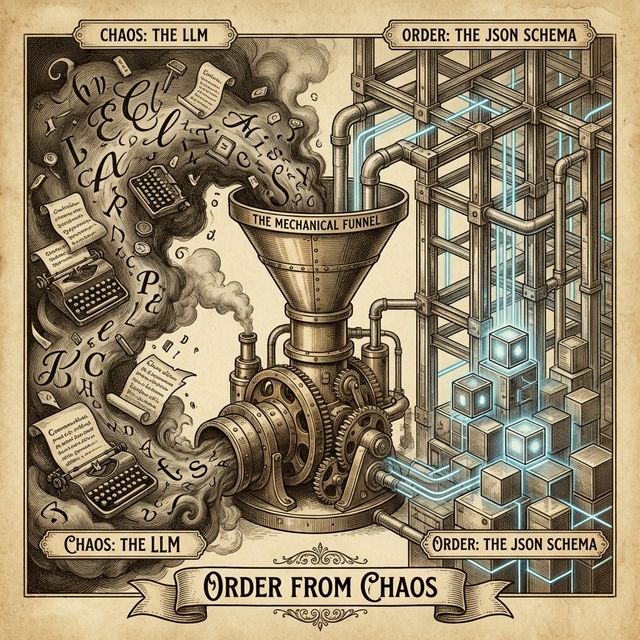

Non-determinism is a bug, not a feature. We explore how to whip the model into compliance using Enforcers, Pydantic, and Constrained Generation.

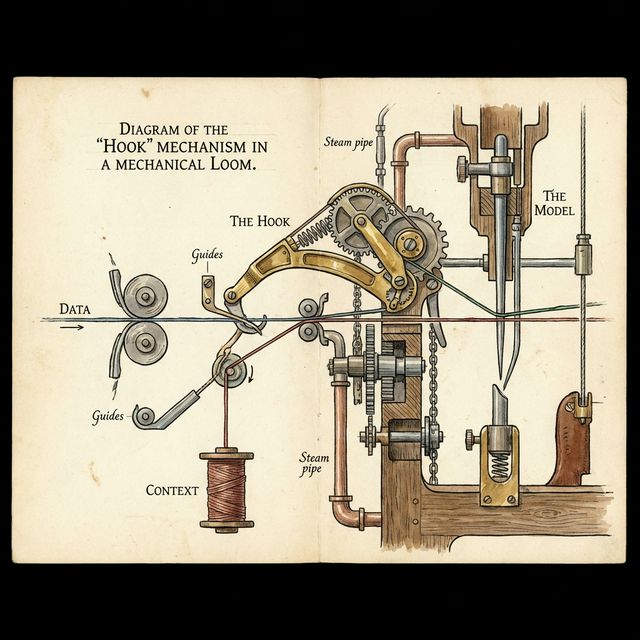

Chat is reactive. Hooks are proactive. We explore how to use Gemini CLI Hooks to inject context and enforce security before the model thinks.

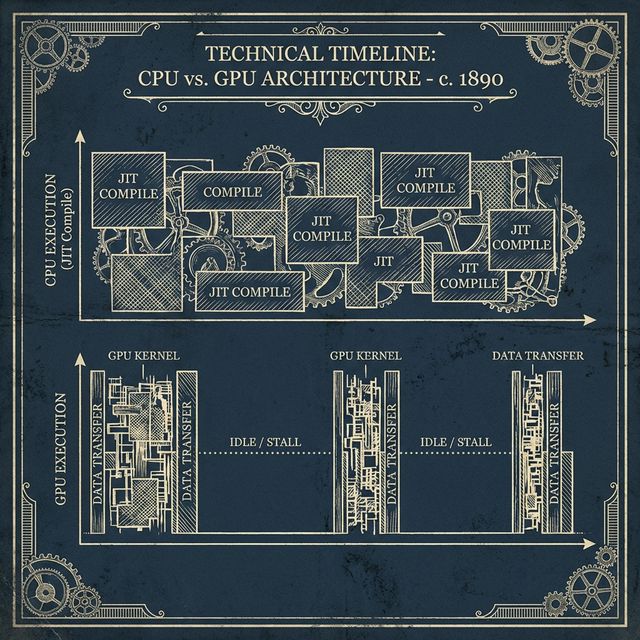

Recompilation is the silent killer of training throughput. If you see 'Jit' in your profiler, you are losing money. We dive into XLA internals.

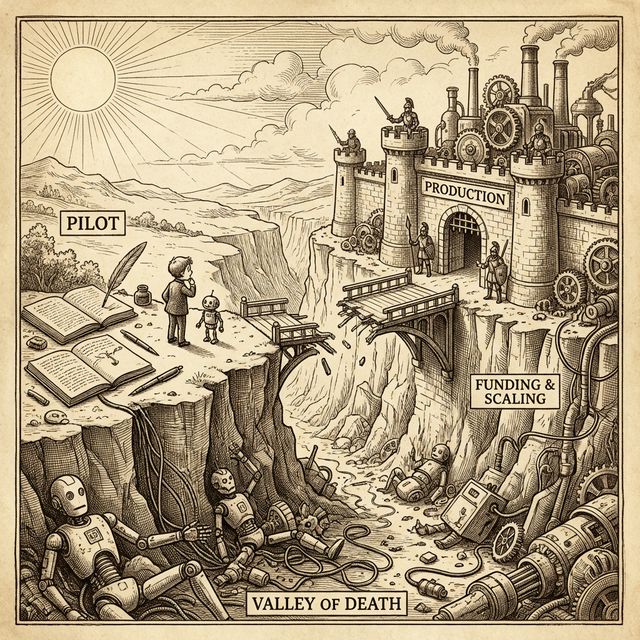

Most enterprise AI fails not because of the model, but because of the 'Last Mile' integration costs. We break down the hidden latency budget of RAG.